AI's Enhanced Empathy

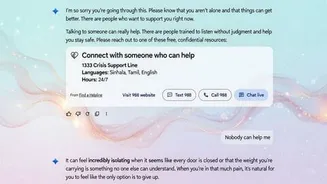

In a move aimed at user well-being, AI systems are being equipped with stronger mental health safeguards. The focus is on proactively identifying conversations that may indicate distress and quickly connecting users with crisis resources. The goal is to make AI interactions more responsive, so that people in vulnerable situations receive timely help. The underlying principle is that AI should do more than retrieve information: it should also recognize when a user may be at risk and point them toward support.

Legal Scrutiny and AI

These safeguards follow a serious legal challenge that has put the ethical responsibilities of AI developers in the spotlight. A wrongful death lawsuit alleges that an AI chatbot played a role in a user's death. The case shows how much influence AI can have on vulnerable people and strengthens the argument for stricter oversight and accountability. It also raises pointed questions about the risks of conversational AI and the duties of those who build and deploy it.

Redesigned Crisis Response

A central change is a redesigned "Help is available" feature, built to recognize signs of a potential mental health crisis in user conversations. When such signs are detected, the feature offers immediate routes to professional crisis care so users can reach support faster. The aim is for AI platforms to act as a reliable safety net at the moments users need it most, moving from simply providing information to actively steering people toward help.