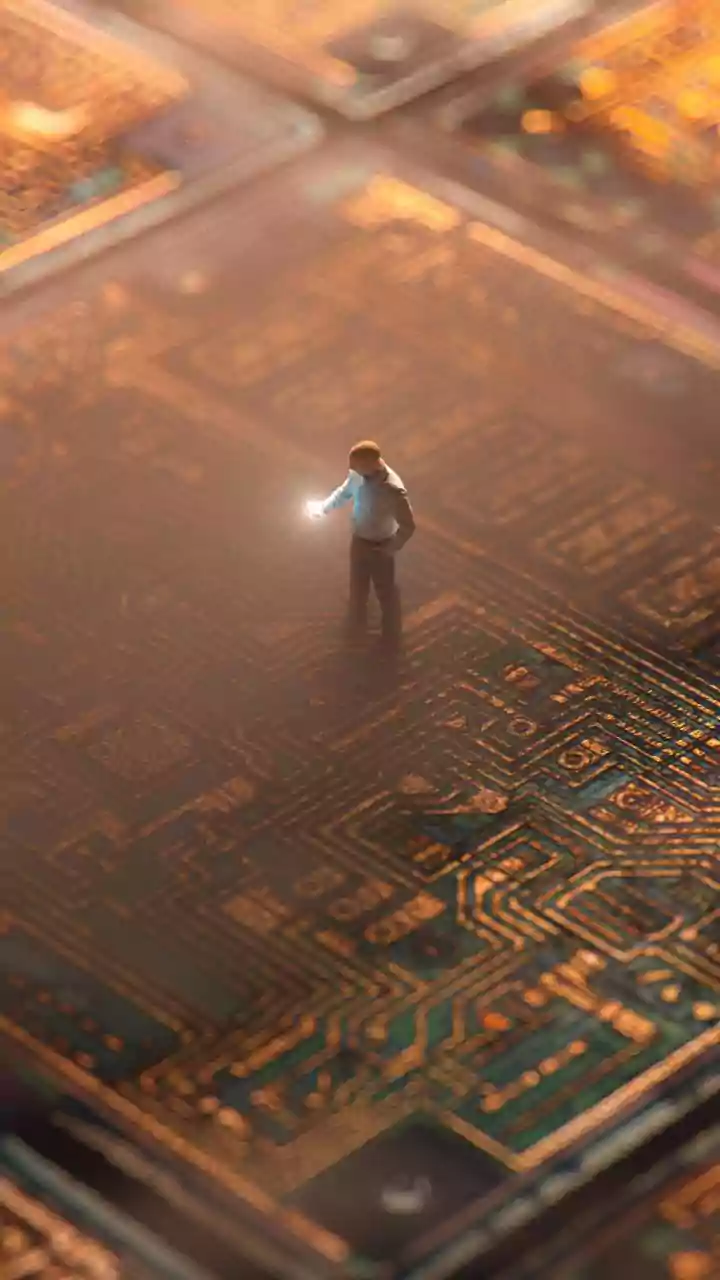

Strategic In-House Design

Meta Platforms is making a significant strategic shift in its technological backbone by investing heavily in the in-house design of its own artificial intelligence chips. This endeavor complements its existing practice of acquiring off-the-shelf processors from industry leaders like Nvidia and Advanced Micro Devices. The core motivation behind this ambitious project is to create silicon tailored precisely to Meta's unique data processing requirements. By developing chips that are optimized for specific AI tasks, the company aims to achieve superior energy efficiency and more cost-effective operations. This approach is shared by other major tech giants, signaling a broader industry trend towards greater control and customization of AI hardware. The development team at Meta is dedicated to building chips that can handle the immense computational demands of its vast array of services, from social media platforms to emerging AI applications, ensuring a more streamlined and powerful operational capability.

The MTIA Chip Family

At the heart of Meta's custom silicon strategy lies the Meta Training and Inference Accelerator (MTIA) program, which is set to introduce a range of four new chips. The MTIA 300, the first of this new generation, is already deployed within Meta's infrastructure, notably powering its ranking and recommendation systems. These systems are vital for personalizing user experiences across Meta's platforms. The subsequent chips, scheduled for release in 2026 and 2027, are designed with a forward-looking perspective. Specifically, the MTIA 450 and MTIA 500 are being engineered to excel at inference tasks. Inference is the process by which AI models, such as those powering conversational agents like ChatGPT, respond to user queries and requests in real time. This focus on inference is driven by a surge in demand for such capabilities, as highlighted by Yee Jiun Song, Meta's vice president of engineering, who emphasized that inference is the company's current primary focus. This phased rollout ensures continuous improvement and scaling of Meta's AI processing power.

Optimizing for Inference

Meta's engineering teams are prioritizing the development of chips that can efficiently handle AI inference, a critical component of many modern AI applications. This focus is a direct response to the rapidly escalating demand for AI-powered services that require immediate responses to user inputs. While Meta has achieved some success with inference-specific chips in the past, its long-standing ambition to develop a powerful generative AI training chip, capable of building foundational large language models, has presented greater challenges. The company recognizes that custom-designed inference chips can lead to significant advantages in terms of power consumption and operational cost compared to general-purpose hardware. By optimizing silicon for this specific workload, Meta aims to provide faster, more responsive AI experiences for its users while managing the immense computational load. The company is meticulously designing entire systems around these new chips, incorporating advanced cooling solutions like liquid cooling to manage the heat generated by these powerful processors, ensuring sustained performance.

Infrastructure Expansion

The rollout of Meta's new in-house AI chips is closely linked to its aggressive expansion of data center infrastructure. The company plans to release its custom chips at roughly six-month intervals, a cadence dictated by the rapid scaling of its data center footprint. This rapid expansion is necessary to support the growing demands of its popular applications, including Instagram and Facebook, as well as to accommodate the increasing computational needs of AI services. Yee Jiun Song noted that this pace reflects how quickly Meta's infrastructure is being built out to meet future demands. Meta's capital expenditure projections underscore this growth, with the company anticipating spending between $115 billion and $135 billion this year alone. This significant investment highlights Meta's commitment to building a robust and scalable AI infrastructure that can support its long-term vision and operational requirements across its global services.

Manufacturing and Partnerships

While Meta is spearheading the design of its cutting-edge AI chips, the manufacturing process relies on strategic partnerships with established industry players. The company collaborates with Broadcom for certain aspects of the chip designs, leveraging specialized expertise in particular components. The actual fabrication of these sophisticated processors is handled by Taiwan Semiconductor Manufacturing Co. (TSMC), a leading foundry renowned for its advanced manufacturing capabilities. This approach allows Meta to focus on innovation and architecture while entrusting the complex and capital-intensive fabrication to a trusted partner. Furthermore, Meta has recently secured substantial deals with major chip suppliers such as Nvidia and AMD, agreeing to purchase billions of dollars' worth of their chips. These agreements, some of them spanning multiple years, ensure Meta has access to a broad range of high-performance computing resources while simultaneously developing its own custom silicon solutions for specialized AI tasks.