TurboQuant's Efficiency Breakthrough

Large language models (LLMs) rely on a "cheat sheet" of past calculations, known as the KV cache, to avoid recomputing information during conversations.

As conversations lengthen, this cache grows and consumes substantial GPU memory. Google's TurboQuant tackles the problem with a two-part compression strategy. The first component, PolarQuant, converts the high-dimensional vectors at the heart of AI processing from standard Cartesian coordinates (like "3 blocks East, 4 blocks North") into polar form (equivalent to "5 blocks at a 37-degree angle", measured from due North). Expressed as a length plus angles, the same information can be represented with far less numerical data. The second component, the Quantized Johnson-Lindenstrauss (QJL) technique, then applies a final 1-bit error-correction pass that cleans up the minor inaccuracies PolarQuant introduces.

Together, the two steps let AI models operate at a precision as low as 3 bits, with no degradation in output quality and no need for retraining. In practical terms, Google demonstrated an 8x speedup on Nvidia H100 accelerators when calculating attention logits, the critical step where a model determines how relevant each part of a prompt is.
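The two ideas above can be illustrated with a toy sketch. It is not Google's implementation: the function names are hypothetical, the polar step works on a single 2-D point rather than a high-dimensional vector, and the 1-bit step is a generic sign-of-random-projection estimator in the spirit of QJL, applied here directly to a vector rather than to PolarQuant's residual error.

```python
import math
import random

def to_polar(x, y):
    """Return (radius, angle in degrees measured counter-clockwise from East)."""
    return math.hypot(x, y), math.degrees(math.atan2(y, x))

def quantize_angle(angle_deg, bits):
    """Map an angle to the index of one of 2**bits equal-width bins."""
    levels = 2 ** bits
    step = 360.0 / levels
    return min(int((angle_deg % 360.0) // step), levels - 1)

def dequantize_angle(index, bits):
    """Return the centre of a bin, used as the reconstructed angle."""
    step = 360.0 / (2 ** bits)
    return (index + 0.5) * step

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def gaussian_rows(m, dim, seed=0):
    """m random projection directions with standard normal entries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(m)]

def one_bit_sketch(key, rows):
    """Keep only the sign (one bit) of each random projection of the key."""
    return [1 if dot(r, key) >= 0 else -1 for r in rows]

def estimate_dot(query, key_bits, key_norm, rows):
    """Estimate <query, key> from the key's sign bits and its norm.

    For Gaussian rows, E[(r.query) * sign(r.key)] equals
    sqrt(2/pi) * <query, key> / ||key||, so rescaling the average
    by sqrt(pi/2) * ||key|| gives an unbiased estimate.
    """
    total = sum(dot(r, query) * b for r, b in zip(rows, key_bits))
    return math.sqrt(math.pi / 2) * key_norm * total / len(rows)

# "3 blocks East, 4 blocks North" becomes a length of 5 at about 53.13
# degrees from East, which is the same direction as about 37 degrees from North.
radius, angle = to_polar(3, 4)
print(radius, angle, 90 - angle)

# Storing the angle in 3 bits keeps only a bin index (0..7).
index = quantize_angle(angle, 3)
print(index, dequantize_angle(index, 3))

# 1-bit sketch of a key vector, then an approximate attention-style dot product.
rows = gaussian_rows(4000, 4, seed=1)
query, key = [1.0, 2.0, 3.0, 4.0], [4.0, 3.0, 2.0, 1.0]
bits = one_bit_sketch(key, rows)
print(dot(query, key), estimate_dot(query, bits, math.sqrt(dot(key, key)), rows))
```

The estimate in the last line is noisy by construction; more projection rows shrink the error at the cost of more stored bits, which is the trade-off a 1-bit correction pass has to balance.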

Market Reactions and Memory Segments

The announcement of TurboQuant's memory-saving capabilities triggered a noticeable reaction across the memory chip market, though analysts were quick to differentiate its impact by segment. Because TurboQuant's efficiency gains apply specifically to the inference stage and the KV cache, analysts saw the primary pressure falling on NAND flash memory. High-bandwidth memory (HBM), which sits inside AI accelerators such as Nvidia's and underpins the training infrastructure of tech giants like Microsoft and Meta, was largely deemed unaffected. Analysts including Jake Silverman of Bloomberg Intelligence and those at Morgan Stanley indicated that demand for HBM, along with the DRAM produced by Micron, would likely remain robust. Companies with significant exposure to NAND flash, such as Kioxia and Sandisk, took the hardest hit from the news and saw their share prices decline.

The Jevons Paradox Effect

Counterbalancing the initial stock market jitters, some analysts offered a more optimistic long-term outlook, citing the 19th-century economic principle known as the Jevons Paradox. First observed in coal consumption, the principle holds that using a resource more efficiently often increases total consumption, because the efficiency unlocks new applications and makes the resource more accessible. Applying this to semiconductors, JPMorgan's trading desk suggested that TurboQuant poses no immediate threat to memory consumption, arguing instead that the more capable AI hardware such efficiency gains enable will ultimately drive greater demand for memory. Similarly, Ray Wang, an analyst at SemiAnalysis, stated that resolving bottlenecks like memory usage makes AI hardware more potent, which leads to more sophisticated models that will in turn require more memory. Ben Barringer of Quilter Cheviot described TurboQuant as an "evolutionary, not revolutionary" advancement, implying that it reinforces existing trends rather than fundamentally disrupting them.

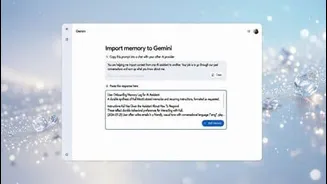

Real-World Adoption & Potential

Beyond the financial market fluctuations, TurboQuant offers immediate, tangible benefits for developers running AI systems outside large-scale data centers. Google has made the algorithm freely available, with no licensing fees and no mandatory retraining, so it can be integrated into existing AI models. Within 24 hours of its public release, developers had adapted it for popular local AI frameworks, including MLX for Apple Silicon devices. In one community benchmark, the Qwen3.5-35B model ran with context lengths of up to 64,000 tokens using TurboQuant at 2.5-bit precision, and the benchmark reported perfect accuracy across all of its tests. This kind of software efficiency matters most to enterprises that prioritize data privacy and to developers pushing the limits of on-device AI, because it expands what existing hardware can do.
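To give a rough sense of why bit width matters at a 64,000-token context, here is a back-of-the-envelope estimate of KV cache size. The layer count, number of key/value heads, and head dimension below are hypothetical placeholders, not the actual configuration of Qwen3.5-35B, and the estimate ignores any per-block metadata a real quantization scheme would store.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bits_per_value):
    """Approximate KV cache size: one key and one value vector
    per token, per layer, per key/value head."""
    values = 2 * layers * kv_heads * head_dim * tokens
    return values * bits_per_value / 8

# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 40, 8, 128
TOKENS = 64_000

full = kv_cache_bytes(TOKENS, LAYERS, KV_HEADS, HEAD_DIM, 16)    # 16-bit baseline
small = kv_cache_bytes(TOKENS, LAYERS, KV_HEADS, HEAD_DIM, 2.5)  # 2.5-bit cache

GIB = 2 ** 30
print(f"16-bit:  {full / GIB:.2f} GiB")   # about 9.77 GiB
print(f"2.5-bit: {small / GIB:.2f} GiB")  # about 1.53 GiB
print(f"ratio:   {full / small:.1f}x")    # 6.4x, i.e. 16 / 2.5
```

Under these assumed dimensions the cache shrinks from roughly 10 GiB to about 1.5 GiB, which is the kind of difference that decides whether a long context fits on a laptop-class device.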