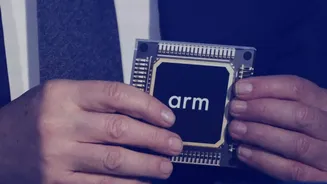

A New Chip Paradigm

Arm has entered a new domain with its first data center CPU, the Arm AGI CPU. The processor is designed for Agentic AI, a form of artificial intelligence that can reason, plan, and act independently rather than merely respond to individual commands. The launch marks a fundamental shift for Arm, which has traditionally licensed its chip architecture to other manufacturers. For decades, Arm's designs have powered a vast array of devices, from everyday smartphones to server systems. With the AGI CPU, however, Arm is directly producing a chip itself, a pivotal moment in the company's history. Arm CEO Rene Haas said that Agentic computing is accelerating changes in how computing infrastructure is built and deployed, and positioned the launch as the next significant phase for the Arm compute platform. The move gives partners an alternative: they can integrate Arm-designed silicon directly, rather than relying solely on licensing intellectual property or using Arm's Compute Subsystems.

AGI CPU Performance Unpacked

The Arm AGI CPU's specifications are tailored to the demands of Agentic AI. Each processor can accommodate up to 136 Arm Neoverse V3 cores, with an emphasis on per-core performance. It delivers memory bandwidth of 6 GB/s per core at a latency under 100 nanoseconds. The chip operates within a 300-watt thermal design power (TDP) and dedicates a core to each program thread, which Arm says keeps performance consistent under sustained load, without degradation and without idle threads going to waste. For physical integration, the AGI CPU supports high-density 1U server chassis in air-cooled setups, accommodating up to 8,160 cores per rack. In liquid-cooled configurations, density can exceed 45,000 cores per rack. Arm asserts that the AGI CPU provides more than double the performance per rack of x86 CPUs. According to Arm, this could translate into capital expenditure savings of up to $10 billion per gigawatt of AI data center capacity.
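The per-core and per-rack figures above can be cross-checked with some simple arithmetic. The following Python sketch is a back-of-the-envelope calculation, not an Arm-published breakdown: it assumes every socket is populated with the full 136 cores and draws its full 300 W TDP, and the variable names are illustrative only. It counts CPU power alone and ignores memory, networking, and cooling overhead.

```python
import math

# Figures quoted in the article.
CORES_PER_CPU = 136
BANDWIDTH_PER_CORE_GBS = 6            # GB/s per core
TDP_WATTS = 300                       # per CPU
AIR_COOLED_CORES_PER_RACK = 8_160     # "up to"
LIQUID_COOLED_CORES_PER_RACK = 45_000 # quoted as a floor ("can exceed")

# Assumption: every CPU in the rack has all 136 cores enabled.
air_cooled_cpus = AIR_COOLED_CORES_PER_RACK // CORES_PER_CPU
leftover_cores = AIR_COOLED_CORES_PER_RACK % CORES_PER_CPU

# Aggregate memory bandwidth per CPU if all cores get the quoted rate.
bandwidth_per_cpu_gbs = CORES_PER_CPU * BANDWIDTH_PER_CORE_GBS

# Power budget per core, and CPU-only power for one air-cooled rack.
watts_per_core = TDP_WATTS / CORES_PER_CPU
air_cooled_cpu_power_kw = air_cooled_cpus * TDP_WATTS / 1000

# Minimum CPU count needed to reach the liquid-cooled core figure.
liquid_cooled_min_cpus = math.ceil(LIQUID_COOLED_CORES_PER_RACK / CORES_PER_CPU)

print(f"Air-cooled CPUs per rack:       {air_cooled_cpus}")
print(f"Memory bandwidth per CPU:       {bandwidth_per_cpu_gbs} GB/s")
print(f"Power budget per core:          {watts_per_core:.2f} W")
print(f"Air-cooled CPU power per rack:  {air_cooled_cpu_power_kw:.1f} kW")
print(f"Liquid-cooled CPUs per rack:    at least {liquid_cooled_min_cpus}")
```

Under these assumptions, the air-cooled figure of 8,160 cores works out to exactly 60 fully populated CPUs per rack, each with 816 GB/s of aggregate memory bandwidth, for about 18 kW of CPU TDP per rack.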

Meta's Leading Role

A cornerstone of the launch is Arm's partnership with Meta, the parent company of Facebook, Instagram, and WhatsApp. Meta is not merely a customer: it co-developed the AGI CPU, working closely with Arm to shape the chip so that it improves the efficiency of Meta's extensive AI infrastructure. The new chip will complement Meta's own custom silicon, the Meta Training and Inference Accelerator (MTIA), enabling more effective orchestration within large-scale AI systems. Santosh Janardhan, head of infrastructure at Meta, said the company worked with Arm to develop an efficient compute platform that substantially boosts its data center performance and density.

An Expansive Partner Network

Beyond Meta, a number of technology companies have committed to deploying the Arm AGI CPU, including Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom. On the manufacturing and system integration side, Arm has partnered with leading Original Equipment Manufacturers (OEMs) and Original Design Manufacturers (ODMs), including ASRock Rack, Lenovo, Quanta Computer, and Supermicro. The ecosystem supporting the platform's expansion into silicon now encompasses more than 50 organizations across hyperscale computing, cloud services, silicon design, memory technology, networking, software development, and manufacturing. It includes Amazon Web Services (AWS), Google Cloud, and Microsoft Azure. TSMC manufactures the Arm AGI CPU on its 3nm process technology. Other contributors include Broadcom, Marvell, Micron, Samsung, SK hynix, Hugging Face, Databricks, Oracle Cloud, Red Hat, Snowflake, Cisco, Arista, MediaTek, and GitHub, among many others. Nvidia CEO Jensen Huang pointed to a collaboration spanning nearly two decades, saying Arm's adaptability has enabled Nvidia to integrate Arm across all its platforms and at various stages of AI, with the two companies together creating a unified platform from cloud to edge to AI factories.