LONDON (AP) — European Union regulators are investigating Snapchat over concerns that the platform isn't doing enough to protect kids and is exposing them to risks such as increased vulnerability to child predators or recruitment by criminals.

The 27-nation EU’s executive Commission said Thursday it was opening a formal investigation into Snapchat under the bloc's sweeping rule book known as the Digital Services Act that's designed to protect internet users.

The European Commission said that Snapchat requires users to be at least 13 to use the platform, but it suspected that the company's “age assurance” system is “insufficient” at keeping younger children off.

Regulators said the system is also not properly checking whether a user is under 17, which it needs to do in order to give them an “age appropriate” experience. They also worried that age checking systems aren’t preventing adults from posing as minors.

The commission suspects Snapchat isn't doing enough to protect minors from being contacted by “users with harmful intent, such as sexual exploitation or recruitment for criminal activities.”

Regulators also suspect that Snapchat's systems aren't good enough at preventing underage users from seeing information about illegal or restricted products like drugs, vapes or alcohol.

Snapchat “appears to have overlooked” the DSA’s “high safety standards for all users,” said Henna Virkkunen, the commission’s executive vice president for tech sovereignty, security and democracy.

The investigation will scrutinize Snapchat’s compliance with EU legislation, she said.

Snapchat has “fully cooperated” with the Commission by “engaging proactively, transparently and working in good faith to meet the DSA’s high safety standards - and we will continue to do so throughout this investigation,” the company said in a statement.

User safety and well-being is a “top priority” and the platform is designed with “privacy and safety built in from the start, including additional protection for teens,” it said.

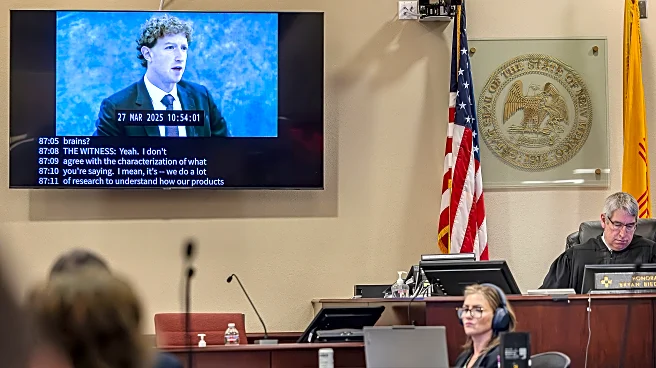

The probe adds to the pressure that social media companies are facing on both sides of the Atlantic over the welfare of young people. On Wednesday, a California jury awarded millions of dollars in damages to a 20-year-old woman after deciding that Meta and YouTube designed their platforms to hook young users without concern for their well-being.

A day earlier, a New Mexico jury handed a $375 million penalty to Meta after determining the company knowingly harmed children’s mental health and concealed what it knew about child sexual exploitation on its platforms.

Meanwhile, the EU accused TikTok earlier this year of breaching the DSA with “addictive design” features that lead to compulsive use by children, and has been investigating Facebook and Instagram since 2024 over child protection shortcomings.

Also Thursday, Brussels accused four of the world's biggest pornographic websites, Pornhub, Stripchat, XNXX and XVideos, of failing to protect children from adult content on their websites, following an investigation opened last year.

The Digital Services Act requires internet companies and online platforms to do more to protect European users from things like harmful content and suspect merchandise, or risk hefty fines worth up to 6% of annual revenue.

Pornhub, Stripchat, XNXX and XVideos did not respond immediately to requests for comment.

In preliminary findings, regulators said the site operators failed to “diligently identify and assess” risks to children and didn't use effective measures to stop minors from accessing their services.

“Children are accessing adult content at increasingly younger ages and these platforms must put in place robust, privacy-preserving and effective measures to keep minors off their services,” Virkkunen said.

The porn sites now have a chance to respond to the accusations before the commission issues a final decision.