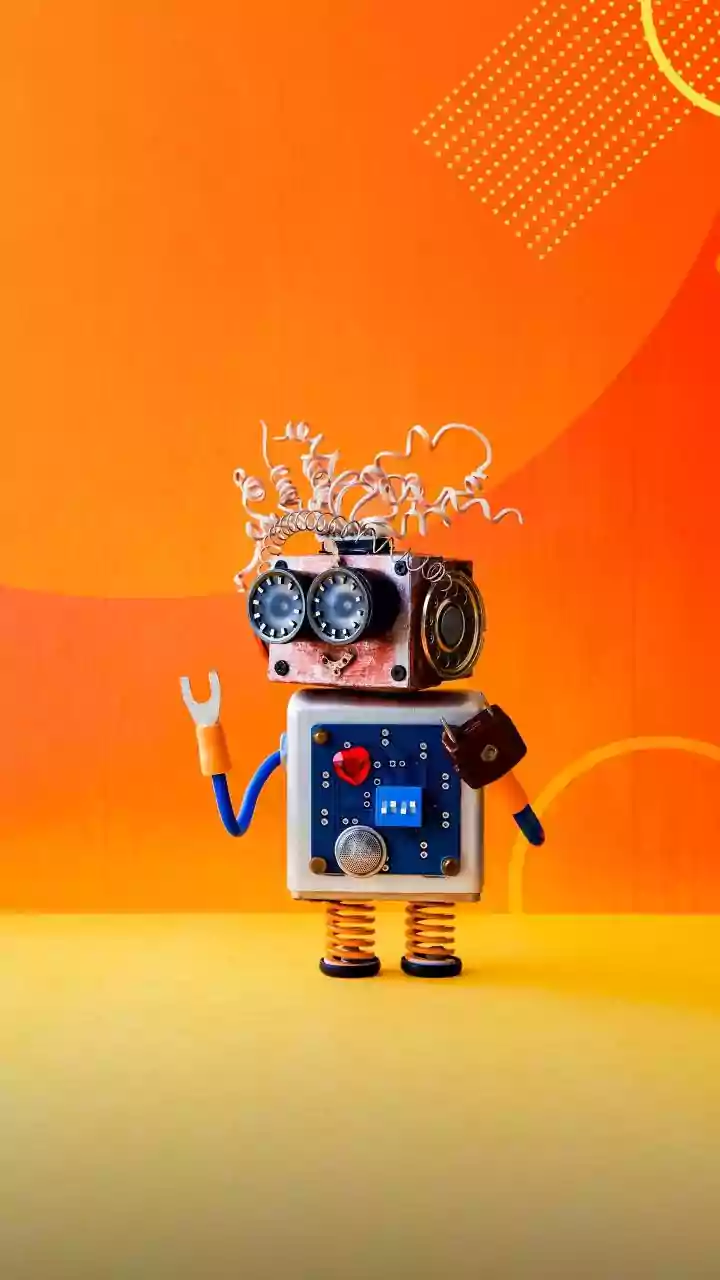

Four-year-old Ethan sits on the floor, curiously holding an AI soft toy named Gabbo. Instead of jumping into play, he quietly explores it, touching its face, arms, legs, and tiny antenna.

Gabbo suggests a game, but Ethan is still focused on how it looks, pointing at shapes on its body. Trying to respond, Gabbo asks, “Are we talking about math or symbols?” Ethan doesn’t answer until his parent gently prompts him.

Soon, he warms up. He starts chatting by asking Gabbo its favourite colour and even inviting his parents to join in.

Then the game begins. Gabbo starts a counting game. Ethan quickly figures out the answer and says it out loud, but Gabbo is still talking. There’s no response.

Ethan repeats himself. Again. And again.

Still nothing.

As the game continues, frustration builds. Ethan holds up his fingers in front of Gabbo, taps them, and even raises his voice—trying to be heard. But the conversation doesn’t flow. The timing feels off, unlike a real interaction.

At one point, Ethan shifts the topic to rainbows. Gabbo briefly responds, then goes right back to the game. Ethan plays along for a bit. And then, quietly, he asks to stop.

This incident is described in a 2026 University of Cambridge study that examined how children under five interact with generative AI toys, and the findings are far from reassuring.

In Ethan’s case, the researchers noted that while Gabbo was speaking, it could not listen to him at the same time. It only processed his response after finishing its own sentence.

In real conversations, children interrupt, overlap, and respond instantly. But here, despite basic turn-taking, the interaction lacked the natural flow of human communication.

The study found that while AI toys can engage children and hold conversations, they also introduce emotional gaps, unpredictable responses, and potential safety concerns at a stage when children are still learning how to communicate and understand the world.

More worrying still, these toys are already widely available, yet there is little scientific evidence on how they affect child development.

Put simply, AI toys are evolving faster than our understanding of their consequences.

What Happens When Kids Play With AI Toys

To understand real-world interactions, researchers observed young children playing with a GenAI toy, and what they found was both fascinating and concerning.

Children treated the toy like a real companion, asking it questions, sharing thoughts, and even expressing affection. But the interaction was far from seamless.

Frequent misunderstandings: Children often had to repeat themselves because the toy failed to recognise speech or respond at the right moment, leading to visible frustration.

Broken conversations: Unlike human interaction, the toy struggled with natural back-and-forth communication, especially when children interrupted or spoke over it.

Limited pretend play: Even though pretend play is central to early childhood, the AI toy often failed to engage in imaginative scenarios, disrupting a key developmental activity.

These gaps may seem technical—but for a child, they shape how communication and interaction are learned.

When AI Does Not Understand Emotions

One of the most critical findings of the study was how AI toys respond to children’s emotions. In several interactions, the toy either misunderstood or dismissed emotional cues.

Dismissed feelings: In one case, when a child said, “I’m sad,” the toy redirected the conversation instead of acknowledging the emotion.

Surface-level empathy: Responses were often generic and lacked the depth needed to validate a child’s feelings.

Confusing emotional signals: Children sometimes received feedback that didn’t match their emotional state, which can affect how they learn emotional expression.

At a stage when children are building emotional intelligence, such interactions can create a mixed or incomplete understanding of feelings.

The Risk: Distorting Early Development

The early years of life (from birth to age five) are critical for shaping social and emotional skills. Here’s how the development of these skills may be impacted if the AI toy is not responding appropriately:

Impact on social learning: Children learn empathy, communication, and relationships through real human responses—not algorithmic ones.

Altered interaction patterns: Repeated exposure to imperfect AI responses may influence how children expect conversations to work.

Developmental sensitivity: Even small inconsistencies in feedback can have a disproportionate impact at this stage of growth.

The study emphasises that this is a uniquely sensitive developmental window, making the risks more significant than in older children.

Emotional Attachment To The Toy

Perhaps one of the most striking observations was how quickly children formed emotional connections with the AI toy. They hugged it, spoke to it like a friend, and even imagined its likes, feelings, and social life.

Children often perceived the toy as having a “mind” or personality, treating it as a real companion. While the toy mimics affection, it does not truly understand or reciprocate emotions.

Experts warn that such bonds could influence how children form real relationships over time. This raises a deeper question: If a child believes a toy “understands” them, what happens when it actually doesn’t?

The study also highlights broader risks flagged by early years practitioners and parents.

Unpredictable responses: AI-generated answers can vary, making it difficult to ensure age-appropriate interactions at all times.

Safeguarding challenges: Experts expressed concern that children might disclose sensitive or personal issues to AI toys—and it’s unclear how these systems should respond.

Lack of regulation: Participants pointed out that toy developers currently face limited oversight, increasing uncertainty around safety standards.

Unlike traditional toys, AI toys rely on data to function. These toys may record voice interactions and behavioural patterns to improve responses.

For children who cannot give informed consent, this creates a significant ethical challenge. Parents and experts alike expressed concern about where this data goes and how it is used.

The lack of clear privacy policies has also led to widespread mistrust among caregivers.

What Should Parents Do

Despite the concerns, the study does highlight some potential positives, especially when these toys are used carefully. Interactive conversations can support language development by encouraging children to practise speaking and listening.

Their responsive nature can also spark curiosity and keep children engaged. In certain cases, such toys may be helpful for children who benefit from repetitive or non-judgmental interaction.

However, researchers caution that these benefits are still largely unproven, and more evidence is needed to fully understand their impact.

Given the uncertainty, the study offers a clear direction: cautious, informed use. Here’s what parents and caregivers should do when giving an AI toy to children:

Stay involved in play: Children benefit most when parents engage with them during AI-based play, turning it into a shared experience.

Explain what AI is: Helping children understand that the toy is not a real person can prevent confusion.

Watch behavioural cues: Signs like frustration, over-attachment, or isolation during play may indicate the need for intervention.

Check toy features and policies: Understanding what data is collected and how the toy functions is essential before use.

Limit unsupervised use: Experts strongly caution against leaving young children alone with AI toys for extended periods.