While tech conglomerates might tout AI as a revolution in the making, it has one simple, unassuming need, without which it cannot bring the profound change that tech enthusiasts are watching for. That need is computational power, and a sudden shortage of it has alarmed the heaviest users.

In the past few months, demand has surged for agentic AI: autonomous tools that independently perform tasks such as writing code or scheduling house tours for real estate brokers. Tech giants have been aggressively securing the computational capacity needed to serve a growing base of customers who are significantly increasing their AI use.

All technological booms showcased similar traits

A Wall Street Journal report draws parallels between the current crunch and the problems that emerged in earlier technological booms, from the 19th-century railroad expansion to the telecom and internet explosion of the early 2000s. Demand is growing far faster than companies can secure resources and build out infrastructure. The crunch that is about to set in is starkly visible in the rising hourly rental prices for GPUs, the microchips used to train and run AI models.

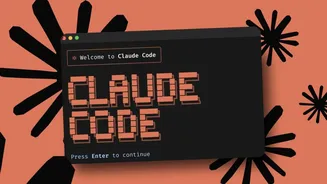

Claude Code and other platforms have started metering computing supplies to users during peak hours.

Spot market prices to access Nvidia’s GPUs in data centres have risen sharply in recent months across the company’s entire product line, according to ORN, a New York-based data provider that publishes market data and structures financial products around GPU pricing. By ORN’s figures, renting one of Nvidia’s most advanced Blackwell-generation chips for one hour now costs $4.08, up 48% from $2.75 two months ago.
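The 48% figure follows directly from the two hourly prices quoted above. A minimal sketch of the arithmetic, where the function name `percent_change` is purely illustrative and the only inputs are the two prices from the report:

```python
def percent_change(old: float, new: float) -> float:
    """Return the percentage change going from `old` to `new`."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100.0


# Hourly rental prices quoted in the article (USD per GPU-hour).
old_price = 2.75  # two months ago
new_price = 4.08  # now

change = percent_change(old_price, new_price)
print(f"Price increase: {change:.1f}%")
```

The unrounded result is slightly above 48%, which matches the rounded figure cited.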

Outages have become common because of the shortage of computing power, prompting most enterprise clients to switch between different models. Companies like Anthropic have been experiencing explosive growth. At the end of 2025, the company hit a $9 billion annual run rate; by February, the figure had ballooned to $14 billion, and two months later it more than doubled to $30 billion. To keep computing needs in check, Anthropic abruptly announced that it would limit the number of tokens users could consume during peak hours, from 5 a.m. to 11 a.m. Pacific Time on weekdays.
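To put the run-rate figures in proportion, the growth multiple between each pair of consecutive data points can be computed directly. This is only a sketch using the three numbers quoted above; the labels and the helper name `growth_multiples` are illustrative, not taken from any report.

```python
# Annual run-rate figures quoted in the article, in billions of USD.
run_rates = [
    ("end of 2025", 9.0),
    ("February", 14.0),
    ("two months later", 30.0),
]


def growth_multiples(points):
    """Return (label, multiple) for each point relative to the one before it."""
    return [
        (later_label, later / earlier)
        for (_, earlier), (later_label, later) in zip(points, points[1:])
    ]


multiples = growth_multiples(run_rates)
for label, multiple in multiples:
    print(f"{label}: {multiple:.2f}x the previous figure")
```

On these numbers, the first step is roughly a 1.6x increase and the second is a little over 2.1x.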

Could the next AI bottleneck be even bigger?

At the start of the AI boom, the biggest gains did not come from companies building AI applications, but from those supplying the infrastructure needed to run them. The primary bottlenecks were AI networking, which allows GPUs to communicate with each other, and the availability of GPUs themselves. The chip shortage was addressed largely by Nvidia’s chips, particularly the H100, which quickly became the gold standard for AI training. As demand surged, the company went from selling $2,000 graphics cards to gamers to selling $30,000 GPUs to data centre operators. The question now facing the whole industry is what the next major bottleneck will be and who will be able to solve it.