AI's Code Inspection Squad

Software development teams are increasingly leveraging artificial intelligence to enhance their code quality assurance processes. A prominent AI firm has introduced a sophisticated code review system designed to work in tandem with human developers. This innovative tool deploys a coordinated group of AI agents, acting like a specialized team, to meticulously examine completed code segments. Their primary mission is to detect bugs and potential issues that might elude the notice of human reviewers, thereby improving the overall robustness of the software before it is integrated into the main project. Admins have the flexibility to activate this feature on a per-repository basis, ensuring it automatically scans every submitted pull request in the cloud. This proactive approach aims to significantly reduce the likelihood of critical errors slipping through the cracks, a common challenge in fast-paced development environments where manual reviews can become time-consuming and prone to oversight.

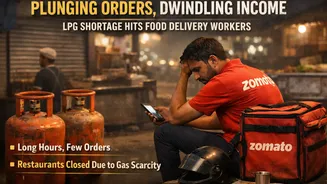

Streamlining the Bottleneck

The software development landscape is experiencing a surge in productivity, with reports indicating a remarkable 200% increase in code output per engineer over the past year. This growth, while positive, has exacerbated a persistent bottleneck: code review. As more code is produced, the process of reviewing and approving it for integration becomes increasingly challenging, leading to delays and potentially superficial checks. Developers are often stretched thin, juggling multiple tasks, which can result in pull requests being skimmed rather than thoroughly examined. To address this need, the AI code review system was developed to act as a reliable, ever-vigilant reviewer for every single pull request. This allows human reviewers to focus on higher-level strategic decisions and complex problem-solving, rather than getting bogged down in the minutiae of bug detection, ultimately improving the efficiency and effectiveness of the entire development lifecycle.

Multi-Agent Review Power

This advanced code review system employs a multi-agent approach, where several AI entities collaborate to scrutinize code. Upon the submission of a pull request, these agents are dispatched to conduct a deep analysis, identifying a wide array of potential defects. They don't just flag issues; they also rigorously verify their findings to filter out any false positives, ensuring that the feedback provided is accurate and actionable. Furthermore, the system ranks these identified bugs according to their severity, presenting developers with a clear prioritization of what needs immediate attention. The outcome of this comprehensive review is consolidated into a single, high-signal overview comment, accompanied by specific in-line annotations pinpointing the exact locations of each bug. This detailed yet concise feedback mechanism significantly aids developers in understanding and rectifying issues efficiently, thereby enhancing code quality and accelerating the integration process.
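The verify, rank, and consolidate steps described above can be illustrated with a minimal Python sketch. It is not the vendor's implementation: the `Finding` type, the severity labels, the `consolidate` function, and the `verify` callback are all hypothetical names chosen for illustration, and the article does not say how the real agents verify or score their findings.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Hypothetical severity labels; the article does not name the actual scale.
SEVERITY_RANK = {"critical": 0, "major": 1, "minor": 2}


@dataclass(frozen=True)
class Finding:
    path: str       # file the candidate bug was found in
    line: int       # line number used for the in-line annotation
    severity: str   # one of the keys in SEVERITY_RANK
    message: str    # short description of the suspected bug


def consolidate(
    candidates: Iterable[Finding],
    verify: Callable[[Finding], bool],
) -> tuple[str, list[str]]:
    """Filter unverified findings, rank the rest, and build the review output."""
    # Verification pass: keep only findings the checker confirms,
    # which is how false positives are dropped in this sketch.
    confirmed = [f for f in candidates if verify(f)]
    # Ranking pass: most severe first. sorted() is stable, so findings
    # with equal severity keep their original order.
    ranked = sorted(confirmed, key=lambda f: SEVERITY_RANK[f.severity])
    # One overview comment plus one in-line annotation per confirmed finding.
    summary = f"{len(ranked)} verified issue(s): " + ", ".join(
        f"{f.severity} in {f.path}" for f in ranked
    )
    inline = [f"{f.path}:{f.line} [{f.severity}] {f.message}" for f in ranked]
    return summary, inline


if __name__ == "__main__":
    # Made-up findings, standing in for what several agents might report.
    found = [
        Finding("a.py", 10, "minor", "unused variable"),
        Finding("b.py", 3, "critical", "null dereference"),
    ]
    overview, annotations = consolidate(found, verify=lambda f: True)
    print(overview)
    for note in annotations:
        print(note)
```

Separating verification from ranking keeps the false-positive filter independent of how severity is assigned, so either step could be swapped out without touching the other.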

Impact on Developer Workflow

The introduction of this AI-driven code review has demonstrably improved the thoroughness of code assessments. Prior to its implementation, only 16% of pull requests at the company received substantive review comments. Following the deployment of the AI system, this figure has dramatically increased to 54%. While the AI does not have the authority to approve pull requests – a critical decision that remains firmly in the hands of human developers – it effectively bridges the gap in review coverage. This means that human reviewers are now better equipped to concentrate on the aspects that truly matter for product delivery, rather than spending excessive time on routine bug detection. The system acts as a powerful assistant, ensuring that a greater proportion of code undergoes rigorous scrutiny, ultimately leading to more stable and reliable software releases.

Cost and Enterprise Features

While this multi-agent AI code review offers enhanced thoroughness compared to simpler tools, it represents a more significant investment. The pricing model is based on token usage, with the average cost for a single review typically ranging between $15 and $25. To manage expenses and provide transparency, administrators have the ability to set monthly spending limits for the service. Furthermore, a dedicated analytics dashboard is available, allowing managers to monitor the number of pull requests reviewed, their acceptance rates, and the associated costs. This provides valuable insights into the system's performance and its financial impact, enabling organizations to optimize their usage and budget effectively. The company also offers an open-source alternative, the Claude Code GitHub Action, for those seeking a more cost-effective, albeit less comprehensive, solution.
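For a rough sense of how the per-review price interacts with a monthly spending limit, the arithmetic can be sketched in Python. Only the $15 to $25 range comes from the figures above; the function names, the cap, and the pull request volume in the usage example are hypothetical, and actual charges depend on token usage rather than a flat rate.

```python
import math


def reviews_within_cap(monthly_cap_usd: float, cost_per_review_usd: float) -> int:
    """Whole number of reviews that fit under a monthly spending cap."""
    if cost_per_review_usd <= 0:
        raise ValueError("cost_per_review_usd must be positive")
    return math.floor(monthly_cap_usd / cost_per_review_usd)


def monthly_cost_range(
    prs_per_month: int,
    low_usd: float = 15.0,   # lower end of the typical per-review cost
    high_usd: float = 25.0,  # upper end of the typical per-review cost
) -> tuple[float, float]:
    """Estimated (low, high) monthly spend if every pull request is reviewed."""
    return prs_per_month * low_usd, prs_per_month * high_usd


if __name__ == "__main__":
    cap = 1000.0  # hypothetical monthly cap in USD
    print(reviews_within_cap(cap, 25.0), "to", reviews_within_cap(cap, 15.0), "reviews")
    print(monthly_cost_range(200))  # hypothetical volume of 200 pull requests
```

Under these assumptions, a team can compare its expected pull request volume against the cap before enabling the feature on a given repository.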

Industry Reactions and Future

The launch of this AI-powered code review has garnered considerable attention and diverse reactions across developer communities. Many have applauded its efficacy in identifying bugs that often go unnoticed by human eyes, recognizing its potential to significantly elevate code quality. However, some apprehension has been voiced regarding the long-term implications for developer roles, with discussions around potential job displacement. Enthusiasts of 'vibe coding,' a trend involving extensive AI assistance in software development, have welcomed this advancement, seeing it as a natural progression where AI not only writes but also reviews and secures code. This marks a substantial shift from AI's previous role as a mere 'copilot,' positioning it as a more autonomous participant in the development lifecycle, capable of handling tasks that were once exclusively human responsibilities. This evolution hints at a future where AI plays an even more integral part in software creation and maintenance.