The Shifting AI Landscape

For years, Nvidia reigned supreme in the AI hardware arena, primarily due to its 'one chip for every task' philosophy, bolstered by the ubiquitous CUDA software ecosystem. This strategy propelled the company to unprecedented heights, commanding over 90% of the AI accelerator market with impressive gross margins. However, the burgeoning importance and cost-sensitivity of AI inference (the process of running AI models) are prompting major technology players to seek more specialized and economical solutions. This fundamental change is creating significant pressure on Nvidia's established model, forcing a re-evaluation of its long-held approach. The market is witnessing a discernible trend where customers are actively exploring alternatives, leading to substantial shifts in market valuation and a clear signal that the era of a single, all-encompassing chip may be drawing to a close.

Giants Build Custom Chips

The landscape of AI acceleration is rapidly evolving, with tech behemoths like Google, Microsoft, Amazon, and Meta aggressively developing their own proprietary AI chips. These custom-designed processors are not just alternatives; they are being explicitly benchmarked against Nvidia's offerings and are positioned as significantly more cost-effective for large-scale deployment. Google's Ironwood TPU, for instance, reportedly offers a substantial reduction in total cost of ownership, estimated to be between 30% and 44% lower than comparable Nvidia GB200 Blackwell servers. Similarly, Microsoft's latest Maia 200 chip, built on TSMC's 3nm process, claims a 30% improvement in performance per dollar over its predecessor and is specifically designed to outperform Google's seventh-generation TPU in FP8 tasks. Meta has also entered the fray, unveiling four new in-house MTIA chips with a plan to release a new generation approximately every six months, underscoring a commitment to specialized AI hardware development.

Market Reacts to Change

The financial markets are already reflecting the growing competitive pressure on Nvidia. A notable instance occurred when reports surfaced indicating that Meta, a key Nvidia client with substantial AI infrastructure spending plans, was considering Google's TPUs for its data centers. This news alone caused Nvidia's stock to plummet by over 6% in a single trading session, wiping out approximately $250 billion in market capitalization, while Alphabet's stock saw a corresponding rise. Nvidia's response to this shift has been notably defensive, emphasizing its position as the leading platform capable of running all AI models across diverse computing environments. While this statement holds technical truth, the market's current focus is increasingly shifting from the breadth of capability to the efficiency and cost-effectiveness of running specific AI workloads, particularly inference tasks, which are projected to dominate AI data center spending in the coming years.

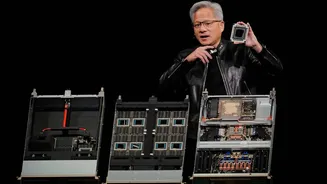

The Future of AI Hardware

The emergence of purpose-built AI chips is reshaping the industry, with innovations like Groq's LPU—now integrated into Nvidia's product pipeline—highlighting a departure from the reliance on high-bandwidth memory (HBM). Groq's LPU utilizes SRAM, a more readily available memory type that sidesteps the supply constraints currently impacting HBM production by companies like SK Hynix and Micron. While the AI hardware market is expanding rapidly, creating opportunities for multiple players, Nvidia's historically dominant pricing power is undeniably under threat. Jensen Huang's recent acknowledgement of the need for dedicated inference hardware signals a significant strategic pivot, validating the long-held arguments of competitors and marking the end of an era where a single, versatile chip defined the market.