AGI: A Shifting Horizon

Artificial General Intelligence, a long-envisioned leap in machine capability, has always been presented as the ultimate AI goal, promising systems that can think, reason, and adapt like humans across diverse tasks. For years, it seemed like a distant endgame for AI pioneers. However, a growing sentiment within the tech industry now questions whether this monumental achievement is still a future milestone or something that has subtly emerged. The core of this discussion often boils down to semantics – how we choose to define intelligence itself dictates whether we believe AGI has already arrived.

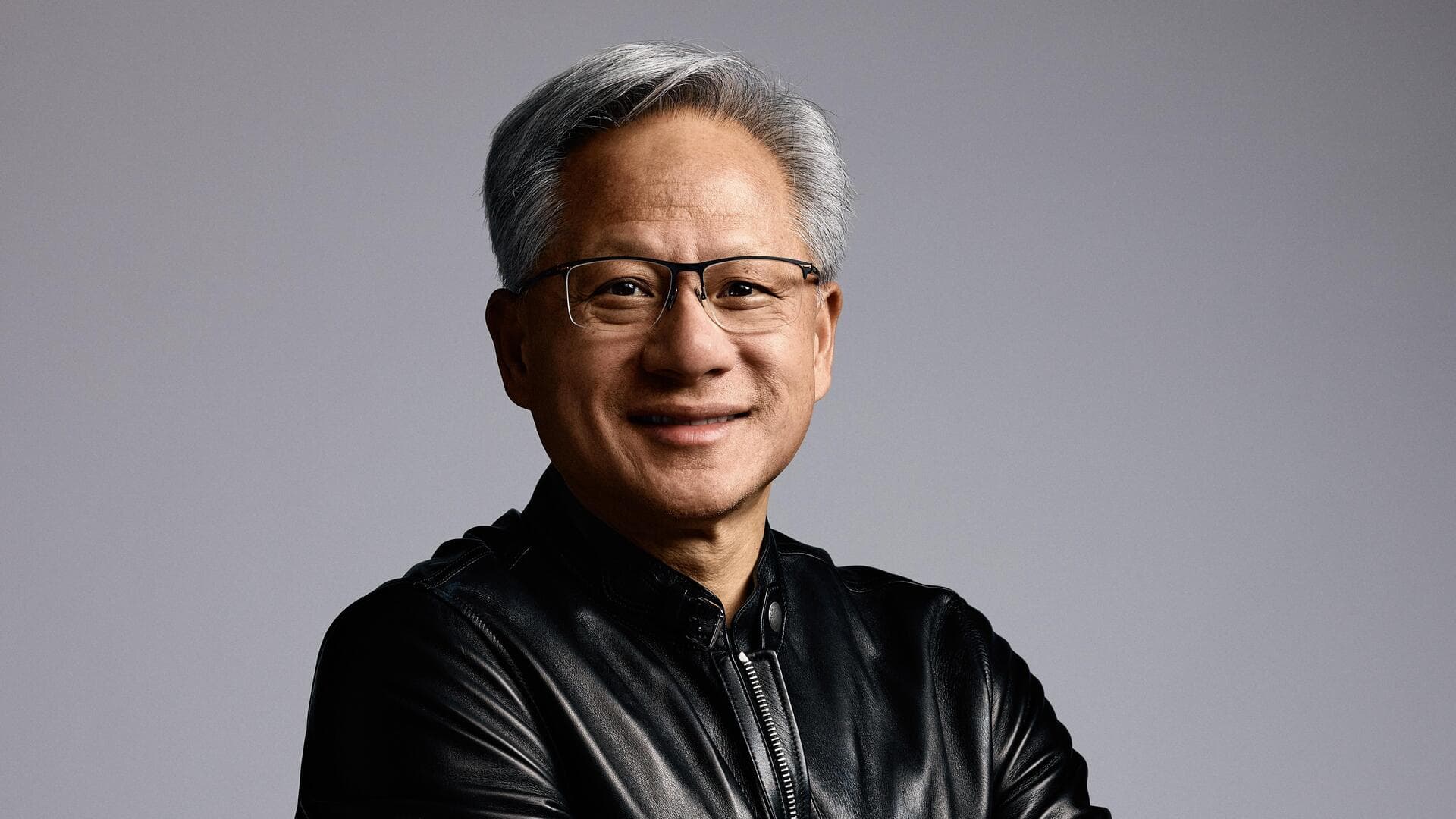

Huang's Provocative Definition

Nvidia's CEO, Jensen Huang, injected a new perspective into the AGI discourse. During a podcast discussion, he considered a specific definition of AGI: an AI capable of successfully launching and managing a company valued at a billion dollars. Huang's response was direct: by this measure, AGI is not a future aspiration but a current phenomenon. This viewpoint, however, leans on a more pragmatic and perhaps limited interpretation of general intelligence, rather than the more expansive concept of machines fully mimicking human cognitive functions. He cited emerging AI agent systems, like OpenClaw, as examples that could, in theory, autonomously develop and scale applications, rapidly attracting vast user bases and generating substantial revenue, reminiscent of the early internet's explosive growth, albeit with its inherent instability.

The Contentious AGI Landscape

Huang's perspective is far from universally accepted within the AI community. The definition of AGI remains hotly debated, and so does the projected timeline for its realization. Demis Hassabis, for one, expresses a more conservative outlook, highlighting that current AI systems still lack crucial capabilities in areas such as long-term strategic planning, consistent reasoning, and continuous, self-directed learning. He posits that true general intelligence is still several years away. On the opposite end of the spectrum, figures like Elon Musk have suggested a much earlier arrival, potentially within the next few years. Even Huang qualifies his own argument: he distinguishes between AI's ability to generate short-term economic value and its capacity to build and sustain complex, enduring organizations, suggesting that replicating the strategic depth and innovation cycles of a company like Nvidia is currently beyond AI's reach.

Agentic AI: Blurring Lines

While the debate over AGI's precise definition and arrival continues, tangible advancements in AI capabilities are increasingly evident through 'agentic' features. Systems like Anthropic's Claude models exemplify this progress. These tools are designed to go beyond simple prompt responses: they can execute multi-step tasks, engage in complex problem-solving, and support workflows that traditionally required significant human involvement. From writing and debugging intricate code to generating comprehensive analytical reports, Claude represents a broader trend towards AI systems exhibiting a greater degree of autonomy. While these capabilities may not fully align with the classical definition of AGI, they are steadily eroding the distinctions between specialized AI and more versatile forms of intelligence. As these agentic systems grow more sophisticated, the conversation is shifting from *if* AGI has arrived to whether our very definition of it is adapting in real time.