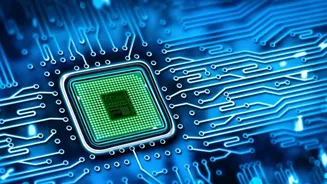

CPU Powering AI

Google is significantly deepening its long-standing relationship with Intel, a collaboration that spans nearly three decades. This renewed commitment involves integrating multiple future generations of Intel's Central Processing Units (CPUs) into Google's expansive Artificial Intelligence data centers. Specifically, the latest Intel Xeon 6 CPUs are set to handle the demanding tasks of AI training and inference. This move is noteworthy as it positions Intel to contend more strongly in the AI chip market, an arena currently dominated by other players. The expansion of this partnership arrives at a critical juncture, as AI data centers are becoming the central battleground for the next wave of AI advancements. Intel's production of these cutting-edge Xeon processors utilizes its advanced 18A manufacturing technology at its Arizona facility, a plant that began operations last year. This strategic enhancement of computing power is key to meeting the escalating demands of sophisticated AI applications and research.

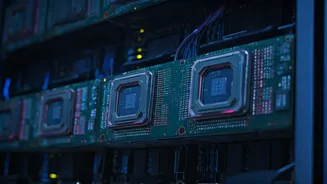

Collaborative IPU Development

Beyond the integration of powerful CPUs, Google and Intel are also reinforcing their joint efforts on a distinct type of chip: the Infrastructure Processing Unit, or IPU. This collaborative initiative between the two tech giants has been ongoing since 2022. The shared vision is that scaling AI infrastructure effectively requires more than just specialized accelerators; it necessitates balanced systems that integrate general-purpose computing with tailored acceleration. Intel's leadership emphasizes that both CPUs and IPUs are fundamental components for achieving the high performance, operational efficiency, and flexibility that contemporary AI workloads demand. This dual-pronged approach, focusing on both core processing and specialized infrastructure management, aims to create a more robust and adaptable foundation for the ever-growing needs of AI development and deployment.

Future-Proofing AI Infrastructure

This strengthened alliance between Google and Intel signals a shared commitment to an open, scalable infrastructure framework for the AI era. By combining general-purpose computing with purpose-built infrastructure acceleration through IPUs, the collaboration seeks to lay the foundation for the next generation of AI-driven cloud services. The alignment is intended to foster innovation across a wide range of enterprises by giving them the computational power and efficiency to use AI effectively. The partnership reflects the belief that a system-level approach, pairing powerful CPUs for core tasks with IPUs that manage infrastructure overhead, is crucial for sustained AI progress and for meeting the intensifying performance and efficiency requirements of complex AI workloads.