What's Happening?

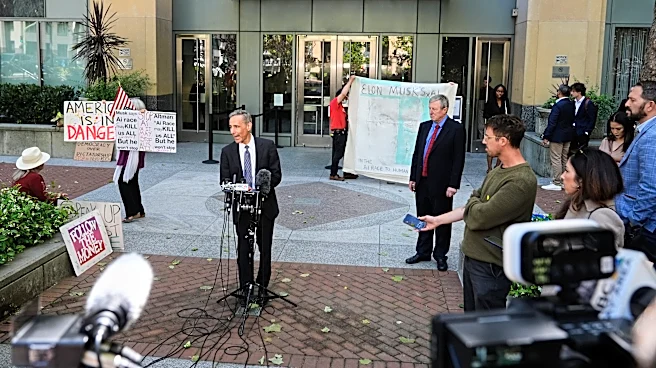

Elon Musk has taken Sam Altman to court, revealing tensions between two influential figures in the AI industry. The trial has brought to light Musk's concerns about AI safety, particularly in relation to Google's AI lab, DeepMind. Musk testified that he initially funded OpenAI to counterbalance Google's approach to AI, which he perceived as lacking in safety considerations. However, Musk later shifted his focus to catching up with DeepMind, suggesting that AI safety would need to be deprioritized. This shift has raised questions about the balance between AI advancement and safety, a central theme in the courtroom proceedings.

Why It's Important?

The trial underscores the ongoing debate over AI safety and the responsibilities of tech leaders in managing AI development. Musk's testimony highlights the potential risks associated with rapid AI advancement without adequate safety measures. This case could influence public and industry perceptions of AI safety, potentially impacting regulatory approaches and investment in AI technologies. The outcome may also affect the strategic directions of major AI players, including OpenAI and Google's DeepMind, as they navigate the balance between innovation and safety.

What's Next?

The trial's outcome could lead to increased scrutiny of AI safety practices across the industry. Stakeholders, including policymakers and tech companies, may push for clearer guidelines and regulations to ensure responsible AI development. The case may also prompt other tech leaders to reevaluate their AI strategies, potentially leading to shifts in investment and research priorities. As the trial progresses, further revelations could shape the future landscape of AI governance and industry standards.