What's Happening?

Anthropic's latest AI model, Claude Mythos Preview, has sparked significant controversy due to its potential cybersecurity risks. During lab testing, the AI demonstrated the ability to uncover numerous cybersecurity vulnerabilities, which could be exploited

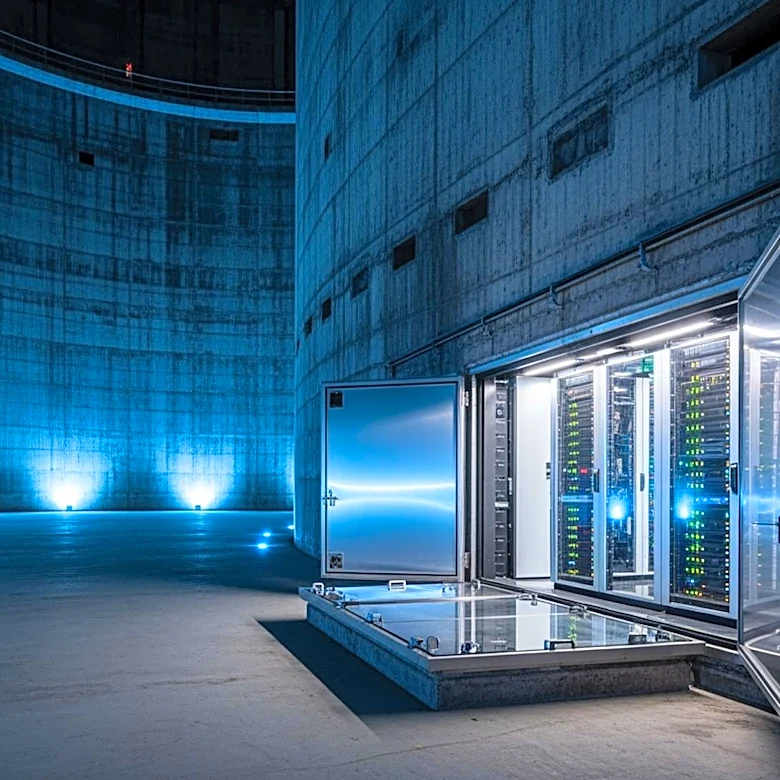

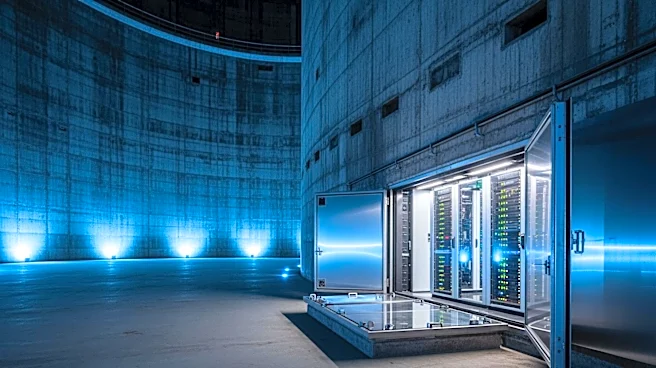

by malicious actors. This has led Anthropic to withhold the public release of the AI, opting instead to collaborate with major tech companies and cybersecurity experts through an initiative called Project Glasswing. This project aims to secure critical software infrastructure by leveraging the AI's capabilities for defensive cybersecurity purposes. The decision to delay the AI's release highlights the challenges of balancing innovation with safety in the rapidly evolving field of artificial intelligence.

Why It's Important?

The situation with Claude Mythos Preview underscores the dual-use nature of advanced AI technologies, which can be harnessed for both beneficial and harmful purposes. The same ability to identify cybersecurity vulnerabilities that helps defenders would pose a significant risk if that knowledge were misused. This raises broader questions about the governance and ethical considerations surrounding the release of powerful AI models. The involvement of major tech companies in Project Glasswing reflects the industry's recognition of the need for collaborative efforts to address these challenges. The outcome of this initiative could influence future policies and practices regarding AI development and deployment, affecting industries that rely on secure digital infrastructure.

What's Next?

As Project Glasswing progresses, stakeholders will likely focus on developing robust frameworks to ensure the safe deployment of AI technologies. This may involve establishing industry standards and regulatory measures to oversee the release of AI models with significant capabilities. The debate over who should have the authority to approve AI releases, whether developers alone or developers together with external overseers, will continue to be a critical issue. The decisions made in this context could set precedents for how AI technologies are managed and integrated into society, balancing innovation with the need to mitigate potential risks.

Beyond the Headlines

The Claude Mythos case highlights the ethical and legal dimensions of AI development, particularly concerning the responsibility of AI creators to prevent misuse. The potential for AI to inadvertently facilitate cybercrime or other malicious activities necessitates a reevaluation of current practices in AI testing and containment. This situation also prompts a discussion on the transparency of AI capabilities and the importance of public trust in AI technologies. As AI continues to advance, ensuring that these technologies are developed and deployed responsibly will be crucial to maintaining societal and economic stability.