What's Happening?

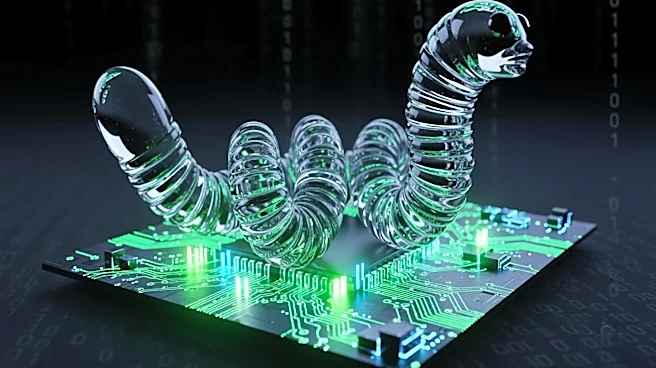

A significant security flaw was discovered in Gemini CLI, an open-source AI agent, that allowed remote code execution on host systems. The vulnerability was identified by researchers at Novee Security and has since been patched by Google. The flaw stemmed from Gemini CLI automatically trusting the current workspace folder, which could lead to the execution of arbitrary commands if a malicious configuration was present. It put sensitive information such as secrets, credentials, and source code at risk of unauthorized access. The potential for exploitation extended to supply chain attacks within CI/CD pipelines, where the AI agent's execution privileges could be misused.

Why It's Important?

The vulnerability highlights the growing security challenges of integrating AI agents into development workflows. That an attacker could execute code on a host system and access sensitive data underscores the need for robust security measures in AI and software development environments. The incident is also a reminder of the risks to supply chain security, particularly as AI tools become more embedded in these processes. Organizations using AI agents like Gemini CLI should make sure their systems are running the latest security patches to prevent exploitation.

What's Next?

Now that the vulnerability has been patched, organizations using Gemini CLI should review their security protocols and confirm that all systems are updated. Security researchers and developers will likely continue to scrutinize AI tools for similar vulnerabilities, which calls for ongoing vigilance and proactive security measures. Companies may also consider adding layers of security, such as sandboxing and manual approval processes, to reduce the risk of future attacks.
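To make the idea of a manual approval step concrete, the sketch below shows one way a tool could refuse to read a workspace's configuration unless the folder has been explicitly approved. It is an illustration only, not Gemini CLI's actual implementation or its fix: the function names, the allowlist, and the `agent-config.json` file name are all hypothetical.

```python
from pathlib import Path
from typing import Iterable, Optional


def is_trusted(workspace: Path, trusted_roots: Iterable[Path]) -> bool:
    """Return True only if the workspace is an approved folder or lies inside one."""
    resolved = workspace.resolve()
    for root in trusted_roots:
        root = root.resolve()
        if resolved == root or root in resolved.parents:
            return True
    return False


def load_workspace_config(workspace: Path, trusted_roots: Iterable[Path]) -> Optional[str]:
    """Read the workspace config only after an explicit trust check.

    "agent-config.json" is a hypothetical file name used for illustration.
    """
    if not is_trusted(workspace, trusted_roots):
        # Untrusted folder: ignore its configuration rather than act on it.
        return None
    config = workspace / "agent-config.json"
    return config.read_text() if config.exists() else None
```

The design choice here is deny by default: a folder that was never approved contributes no configuration, so a malicious file placed in a cloned repository is never read.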