What's Happening?

Meta has announced plans to use data from its employees' keystrokes and mouse movements to train its AI models. This initiative aims to provide real-world examples of how people interact with computers, enhancing the capability and efficiency of Meta's AI systems. The company has implemented safeguards to protect sensitive content, ensuring that the data is used solely for training purposes. This move highlights the lengths to which tech companies are going to source training data, which is essential for developing effective AI models.

Why Is It Important?

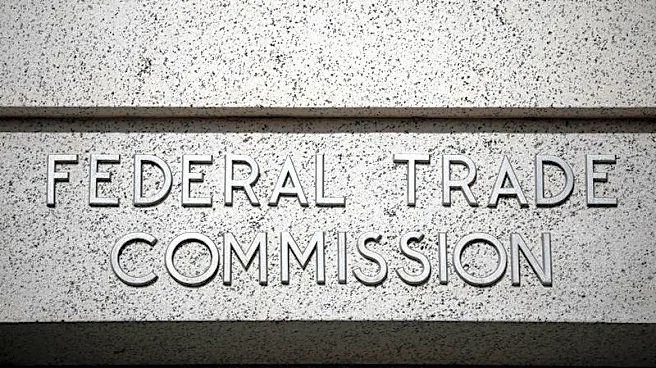

The use of employee data for AI training raises significant privacy concerns, as it involves monitoring how individuals interact with their computers. While Meta says that safeguards are in place, the practice underscores the broader ethical and privacy challenges associated with AI development. As companies seek new data sources to improve AI capabilities, they must balance innovation with the protection of individual privacy rights. This development could prompt discussions on the ethical use of data in AI training and the need for clear guidelines and regulations.

What's Next?

Meta's approach may lead to increased scrutiny from privacy advocates and regulatory bodies, potentially influencing future policies on data usage in AI training. Other tech companies might adopt similar strategies, further intensifying the debate over privacy and data protection. As AI technology continues to advance, the industry will need to address these ethical concerns to maintain public trust and ensure responsible AI development. Ongoing dialogue between tech companies, regulators, and the public will be crucial in shaping the future of AI and data privacy.