What's Happening?

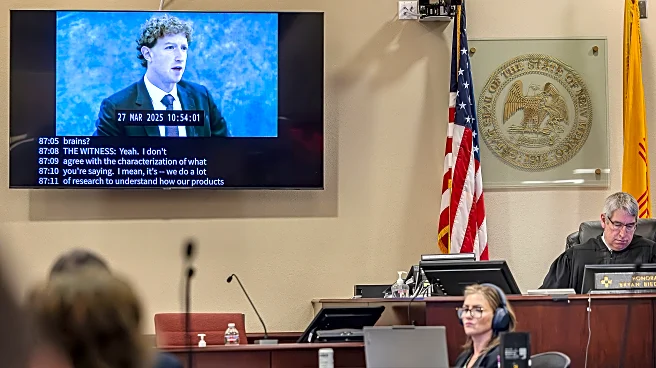

A jury in New Mexico has found Meta, the parent company of Facebook, Instagram, and WhatsApp, liable for harming children's mental health through its social media platforms. The jury imposed a $375 million penalty on Meta, a significant decision in a series of trials focused on social media child safety. The case, led by New Mexico Attorney General Raúl Torrez, accused Meta of prioritizing profits over the safety of young users and failing to protect them from harmful content and sexual predators. The jury determined that Meta violated state consumer protection laws by making misleading statements and engaging in unfair trade practices. Meta has announced plans to appeal the verdict, maintaining that it works diligently to keep users safe on its platforms.

Why It's Important

This verdict marks a pivotal moment in the ongoing scrutiny of social media companies over their impact on children's mental health. The decision could serve as a model for future cases, potentially leading to stricter regulations and accountability measures for tech companies. The outcome also tests the limits of Section 230 of the Communications Decency Act, which shields platforms from liability for user-generated content: because the claims targeted Meta's own statements and business practices rather than content posted by users, the case sidestepped that protection. If upheld, the ruling could compel social media platforms to adopt more robust safety measures, affecting their user engagement strategies and advertising revenue. The case underscores the growing demand for tech companies to address the mental health effects of their platforms, particularly on vulnerable young users.

What's Next?

As Meta prepares to appeal the decision, the case is likely to influence other ongoing lawsuits against social media companies. Trials involving similar allegations are scheduled, including a significant case in Los Angeles focusing on platform addiction. These legal battles could take years to resolve, with potential implications for tech industry regulations and business practices. The outcomes may prompt legislative action to enhance child safety online, reflecting increasing public and governmental pressure on tech companies to prioritize user well-being over profit.