What's Happening?

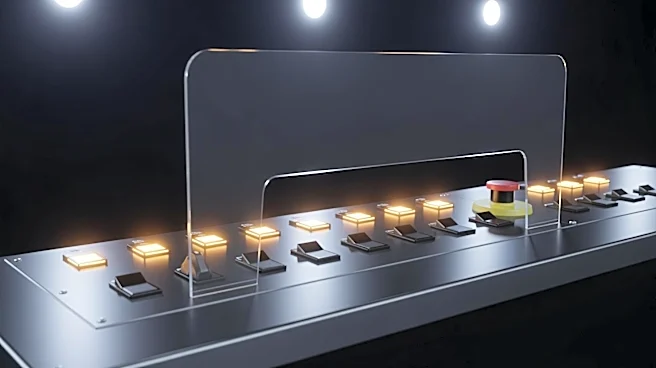

The emergence of agentic AI systems, which can autonomously interpret and execute human goals, is prompting calls for robust internal controls. These systems offer extraordinary capabilities but also introduce new risks, much like the risks that financial information systems (FIS) manage through internal controls. In FIS, such controls maintain trust, stability, and accountability: they prevent fraud, safeguard assets, and restrict access to sensitive information. The article emphasizes that agentic AI must likewise operate within human-defined boundaries, such as limits on time, scope, and resources, and explicit risk thresholds, to prevent unintended escalation or runaway processes. Central to this is the concept of 'human in the loop': AI systems should pause for human approval before high-impact or irreversible actions, preserving human authority over critical decisions.
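The boundaries and approval gate described above can be illustrated with a minimal Python sketch. It is an assumption-laden illustration, not the article's method: the `Boundaries`, `Action`, and `ControlledAgent` names, the 0.0 to 1.0 risk score, and the `approve` callback standing in for a human reviewer are all hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Set


@dataclass
class Boundaries:
    """Hypothetical human-defined limits; field names and values are illustrative."""
    allowed_actions: Set[str]  # scope: the only actions the agent may take
    max_steps: int             # resource limit: caps runaway loops
    max_seconds: float         # time limit for the whole session
    risk_threshold: float      # actions scored above this need human approval


@dataclass
class Action:
    name: str
    risk: float                # assumed 0.0-1.0 score from some upstream assessment
    irreversible: bool = False


class BoundaryViolation(Exception):
    """Raised when an action falls outside the human-defined boundaries."""


class ControlledAgent:
    def __init__(self, bounds: Boundaries, approve: Callable[[Action], bool]):
        self.bounds = bounds
        self.approve = approve          # hypothetical human-in-the-loop callback
        self.steps = 0
        self.started = time.monotonic()
        self.log: List[str] = []        # audit trail of every decision

    def execute(self, action: Action) -> str:
        # Hard boundaries: violations stop the agent outright.
        if action.name not in self.bounds.allowed_actions:
            raise BoundaryViolation(f"out of scope: {action.name}")
        if self.steps >= self.bounds.max_steps:
            raise BoundaryViolation("step budget exhausted")
        if time.monotonic() - self.started > self.bounds.max_seconds:
            raise BoundaryViolation("time limit exceeded")
        # Pause for a human before high-impact or irreversible actions.
        if action.irreversible or action.risk > self.bounds.risk_threshold:
            if not self.approve(action):
                self.log.append(f"denied: {action.name}")
                return "denied"
        self.steps += 1
        self.log.append(f"executed: {action.name}")
        return "executed"  # a real system would perform the action here


bounds = Boundaries({"read_report", "send_payment"}, max_steps=10,
                    max_seconds=60.0, risk_threshold=0.5)
agent = ControlledAgent(bounds, approve=lambda a: False)  # reviewer declines everything
print(agent.execute(Action("read_report", risk=0.1)))                      # executed
print(agent.execute(Action("send_payment", risk=0.9, irreversible=True)))  # denied
print(agent.log)  # ['executed: read_report', 'denied: send_payment']
```

In this sketch, denied actions are logged but do not consume the step budget; whether they should is a policy choice each organization would have to make.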

Why Is It Important?

The implementation of internal controls for agentic AI is vital to ensure these systems remain tools that operate within human-defined boundaries, rather than becoming autonomous entities that could act independently of human intent. This approach is crucial for maintaining safety, trust, and accountability in AI systems, similar to the role internal controls play in financial systems. As AI systems become more capable, the risk of them operating without oversight increases, potentially leading to unintended consequences. By adopting a disciplined approach to AI governance, organizations can ensure responsible and sustainable AI deployment, aligning AI actions with human values and organizational goals.

What's Next?

Organizations are expected to govern agentic AI with internal controls modeled on those used in financial systems. These include defining exactly what each AI system can access and segregating duties to prevent closed-loop autonomy, in which a single system both decides on and carries out an action with no independent check. As AI systems evolve, policies, ethical constraints, and safety layers will become increasingly important for keeping their behavior aligned with human values. The focus will remain on keeping a 'human in the loop' who holds authority over critical decisions, so that AI systems act for humans, not instead of them.
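The two controls named here, defining what an AI system can access and segregating duties, can be sketched together in Python. This is an illustrative assumption rather than the article's design: the principal names, permission strings, `PaymentRequest`, and `authorize` are hypothetical, and a payment workflow stands in for any high-impact action.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set

# Hypothetical access policy: each principal gets only the permissions it needs.
ACCESS_POLICY: Dict[str, Set[str]] = {
    "agent:invoice-bot": {"invoices:read", "payments:propose"},
    "human:controller": {"payments:approve"},
}


def can(principal: str, permission: str) -> bool:
    """Deny by default: unknown principals get no permissions."""
    return permission in ACCESS_POLICY.get(principal, set())


@dataclass
class PaymentRequest:
    amount: float
    proposed_by: str
    approved_by: Optional[str] = None


def authorize(req: PaymentRequest) -> bool:
    """Allow execution only if proposer and approver are different, entitled principals."""
    if not can(req.proposed_by, "payments:propose"):
        return False
    # Segregation of duties: the proposer can never approve its own request,
    # which rules out a closed loop where the agent signs off on itself.
    if req.approved_by is None or req.approved_by == req.proposed_by:
        return False
    return can(req.approved_by, "payments:approve")


print(authorize(PaymentRequest(500.0, "agent:invoice-bot")))                       # False
print(authorize(PaymentRequest(500.0, "agent:invoice-bot", "agent:invoice-bot")))  # False
print(authorize(PaymentRequest(500.0, "agent:invoice-bot", "human:controller")))   # True
```

The self-approval check is deliberately independent of the access policy, so even a misconfigured policy that granted the agent approval rights would not let it close the loop on its own request.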

Beyond the Headlines

The discussion around agentic AI highlights broader ethical and legal implications, particularly concerning autonomy and accountability. Ensuring AI systems remain within human control is not just a technical challenge but also a cultural and ethical one, requiring organizations to rethink their approach to AI governance. The potential for AI systems to operate independently raises questions about the future of work, decision-making, and the role of humans in increasingly automated environments. These considerations will shape the development and deployment of AI technologies, influencing public policy and societal norms.