What's Happening?

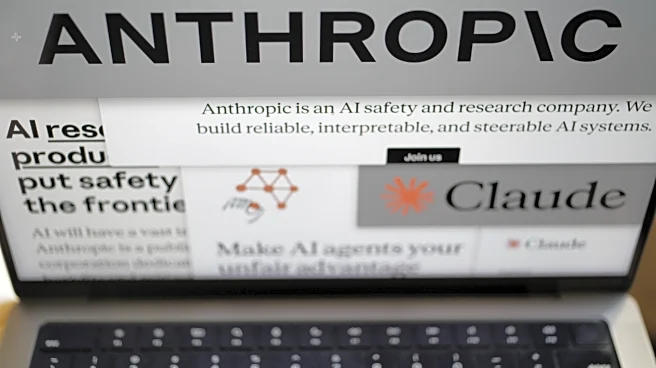

A federal judge in San Francisco has expressed concerns over the U.S. government's ban on the AI company Anthropic, suggesting that it appears punitive. The ban followed Anthropic's public disagreement with the Pentagon over the use of its AI model, Claude, for military purposes. The company has filed lawsuits claiming that the ban is retaliatory and violates its First Amendment rights. The judge is considering whether to temporarily halt the ban while the case is reviewed, a question that highlights the tension between government regulation and corporate autonomy in the tech sector.

Why It's Important?

This case underscores the complex relationship between technology companies and government agencies, particularly concerning national security and ethical AI use. The outcome could set a precedent for how the government interacts with tech companies that challenge its policies. It raises questions about the balance between national security and free speech, and the extent to which the government can influence corporate decisions. The case also highlights the growing importance of AI ethics and the potential consequences for companies that prioritize ethical considerations over government demands.

What's Next?

The judge is expected to make a ruling soon on whether to pause the government's ban. This decision could impact Anthropic's business operations and its relationships with other companies. If the ban is lifted, it may encourage other tech companies to take a stand on ethical issues without fear of government retaliation. Conversely, if the ban is upheld, it could deter companies from publicly opposing government policies. The case will likely continue to draw attention from both the tech industry and legal experts as it progresses.