What's Happening?

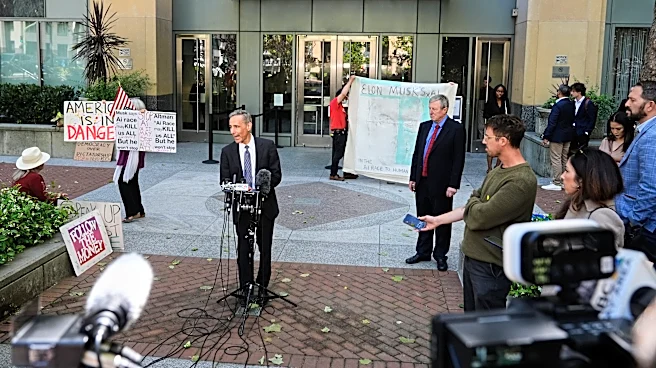

Elon Musk has initiated a lawsuit against OpenAI, questioning the organization's commitment to its founding mission of ensuring AI safety. The legal proceedings, taking place in a federal court in Oakland, California, have brought to light concerns about OpenAI's shift from a research-focused entity to a product-driven company. Rosie Campbell, a former employee, testified that the company's focus on commercializing AI products has compromised its safety protocols. She highlighted an incident where Microsoft's deployment of OpenAI's GPT-4 model in India occurred without prior evaluation by the company's Deployment Safety Board. This shift in focus has raised questions about the organization's adherence to its original safety commitments. The lawsuit underscores the tension between OpenAI's non-profit board and its for-profit subsidiary, with Musk arguing that the transformation into a major private company violates the founders' implicit agreement.

Why Is It Important?

The lawsuit against OpenAI is significant because it highlights the tension between AI safety and commercial interests. As AI technologies become increasingly integrated into for-profit enterprises, the balance between innovation and safety becomes crucial. The case raises concerns about the governance structures of AI organizations and the potential need for stronger regulatory oversight. The outcome could influence how AI companies weigh safety against profitability, affecting stakeholders across the tech industry. The case also underscores the ethical considerations of deploying powerful AI models without adequate safety measures, which could have far-reaching consequences for public trust and the responsible development of AI technologies.

What's Next?

The legal proceedings are expected to continue, with potential implications for OpenAI's operational practices and governance structures. The case may prompt other AI companies to reevaluate their safety protocols and governance models. Additionally, the lawsuit could lead to increased calls for government regulation of AI technologies, particularly in ensuring that safety measures are prioritized over commercial interests. Stakeholders, including tech companies, regulators, and civil society groups, will likely monitor the case closely, as its outcome could set precedents for the future of AI governance and safety standards.

Beyond the Headlines

Beyond the immediate legal battle, the case raises deeper questions about the ethical responsibilities of AI developers. The tension between innovation and safety reflects broader societal concerns about the impact of AI on human rights and societal values. The case could catalyze discussions on the need for transparent and accountable AI development processes, emphasizing the importance of aligning AI technologies with ethical standards. It also highlights the potential risks of concentrating decision-making power in the hands of a few individuals, underscoring the need for diverse and inclusive governance structures in AI organizations.