What's Happening?

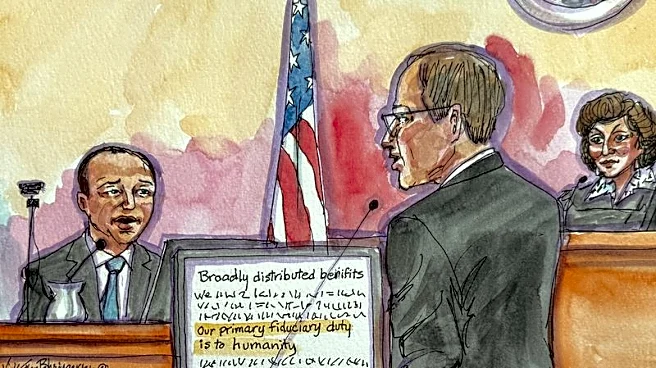

Elon Musk's lawsuit against OpenAI is bringing the company's safety practices under scrutiny. The legal battle focuses on whether OpenAI's for-profit subsidiary aligns with its original mission to ensure AI benefits humanity. Testimony in a federal court in Oakland has raised concerns about OpenAI's commitment to AI safety, with former employees stating that the company's shift toward product development compromised its safety focus. The case has highlighted incidents such as the deployment of GPT-4 in India without proper safety evaluations, raising questions about OpenAI's governance and safety protocols.

Why Is It Important?

The lawsuit underscores the importance of safety in AI development, particularly as AI technologies become more integrated into commercial applications. The case could influence how AI companies prioritize safety and transparency, potentially leading to stronger regulatory oversight. The outcome may also affect public trust in AI technologies and the companies that develop them. As AI continues to evolve, robust safety measures will be crucial to mitigating the risks and addressing the ethical concerns associated with its deployment.

What's Next?

The trial will continue to examine OpenAI's safety practices and governance structure. Depending on the court's findings, OpenAI may face pressure to enhance its safety protocols and transparency. The case could also prompt broader industry discussions about the need for standardized safety measures and regulatory frameworks for AI technologies. Stakeholders, including policymakers and industry leaders, will be closely monitoring the proceedings to assess potential implications for AI governance and safety standards.