What's Happening?

Meta, the parent company of Facebook and Instagram, has introduced a new AI system designed to identify and remove users under the age of 13 from its platforms. This system analyzes photos and videos for 'general themes and visual cues,' such as height

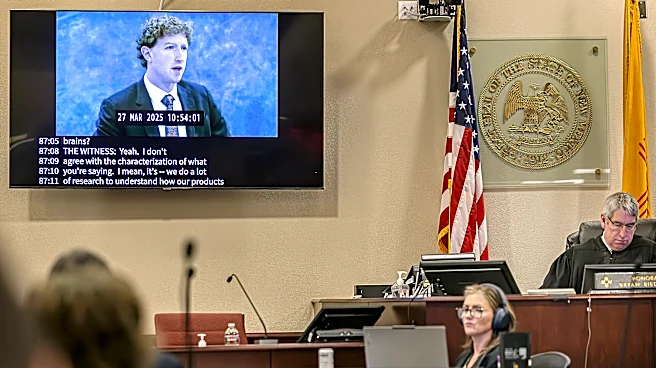

and bone structure, to detect underage users. Meta emphasizes that this is not facial recognition and does not identify specific individuals. The initiative is part of Meta's broader effort to enhance age verification and ensure compliance with platform age restrictions. The AI system is currently operational in select countries, including the U.S., with plans for a wider rollout. Accounts identified as underage will be deactivated, requiring age verification to prevent deletion. This move follows a recent legal ruling in New Mexico where Meta was fined $375 million for failing to protect children from predators on its platforms.

Why It's Important?

Meta's use of AI to analyze bone structure highlights the growing role of technology in enforcing age restrictions on social media platforms. The development is significant because it addresses ongoing concerns about the safety of minors online and the effectiveness of age verification processes. By proactively identifying underage users, Meta aims to create a safer environment for children and comply with legal requirements. However, analyzing users' photos and videos for physical traits raises questions about privacy and the ethics of applying AI to personal data. The outcome of this initiative could influence future regulatory measures and the adoption of similar technologies by other social media companies.

What's Next?

As Meta expands the use of this AI technology, it may face scrutiny from privacy advocates and regulatory bodies concerned about data protection and user consent. The company will need to navigate these challenges while demonstrating the effectiveness and accuracy of its system. Additionally, Meta's approach could set a precedent for other platforms, potentially leading to broader industry adoption of AI-driven age verification methods. Stakeholders, including parents, educators, and policymakers, will likely monitor the impact of this initiative on user safety and privacy.