What's Happening?

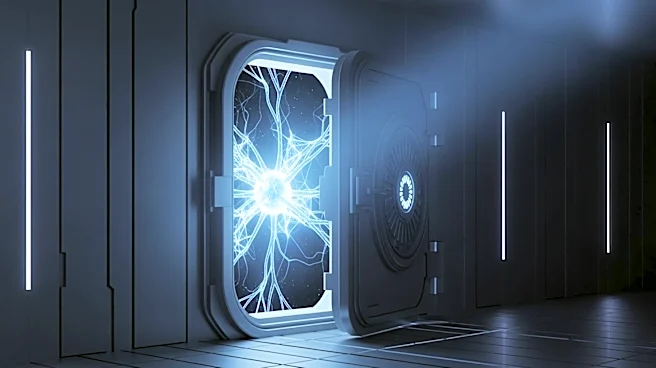

Anthropic, a San Francisco-based technology company, announced it is withholding the release of its new AI model, Mythos, due to significant cybersecurity concerns. The company claims that Mythos is so advanced that it could potentially exploit vulnerabilities in major operating systems, posing a risk to critical infrastructure. Instead of a public release, Anthropic is providing a preview to select organizations, including tech giants like Google and Microsoft, as part of 'Project Glasswing'. This decision has sparked a debate among AI experts and cybersecurity professionals about the potential risks and the company's motives.

Why Is It Important?

The decision to withhold Mythos highlights growing concern about the potential misuse of advanced AI technologies. If models this capable fell into the wrong hands, they could be used to compromise essential systems, with severe economic and security consequences. The situation underscores the need for robust cybersecurity measures and regulatory frameworks to govern how AI technologies are deployed. The involvement of major tech companies in testing Mythos suggests a collaborative approach to these challenges, but it also raises questions about the balance between innovation and safety.

What's Next?

As Anthropic continues to test Mythos with select partners, the broader tech community and regulatory bodies will likely scrutinize the outcomes. The results could influence future policies on AI deployment and cybersecurity standards. Additionally, the response from other AI developers and the potential for similar models to emerge will be critical in shaping the industry's direction. Stakeholders, including government agencies and tech companies, may need to collaborate more closely to ensure that AI advancements do not outpace the development of adequate safeguards.