What's Happening?

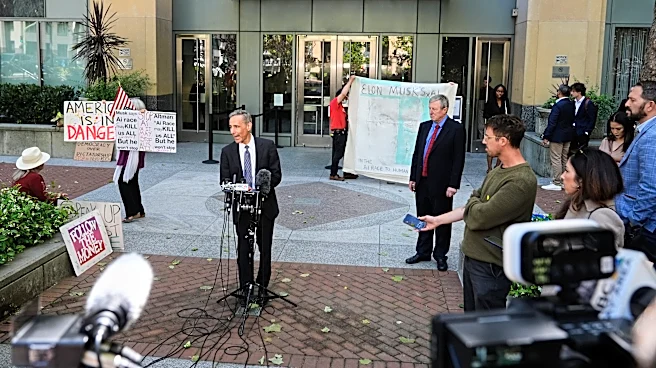

Elon Musk has filed a lawsuit against OpenAI that scrutinizes the organization's safety practices and governance. The lawsuit claims that OpenAI's shift from a research-focused entity to a for-profit company has compromised its commitment to AI safety. Testimony in a federal court in Oakland highlighted concerns about the company's internal governance and decision-making processes. Former employees testified that the focus on product development overshadowed safety considerations. The case raises questions about the balance between innovation and safety in AI development, with Musk advocating for stronger regulatory oversight.

Why It's Important?

The lawsuit brings to light critical issues regarding the governance and ethical responsibilities of AI companies. As AI technology becomes more integrated into various sectors, ensuring safety and ethical standards is paramount. The case could set a precedent for how AI companies are regulated and held accountable for their practices. It also emphasizes the need for transparency and robust safety protocols in AI development. The outcome of this lawsuit could influence public policy and industry standards, impacting how AI technologies are developed and deployed in the future.

What's Next?

The court's decision could lead to increased regulatory scrutiny of AI companies, potentially resulting in new guidelines and standards for AI safety. Stakeholders, including policymakers and industry leaders, may push for more stringent oversight to ensure that AI technologies are developed responsibly. The case may also prompt other companies to reevaluate their safety practices and governance structures to avoid similar legal challenges. As the lawsuit progresses, the tech industry and others will watch it closely, since its outcome could have far-reaching implications for AI development.