What's Happening?

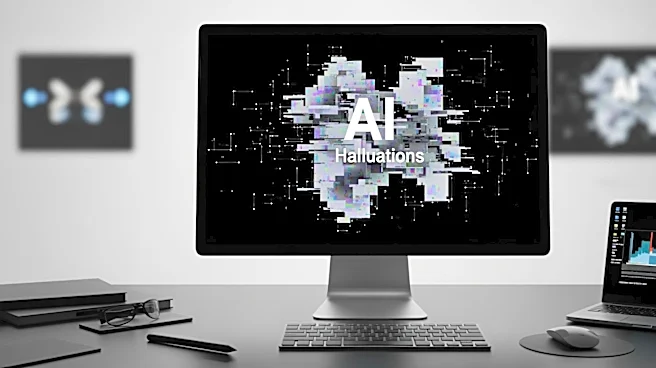

Sullivan & Cromwell, a prominent law firm, has filed an emergency letter with a federal bankruptcy court seeking leniency after submitting a legal brief containing numerous errors attributed to artificial intelligence (AI) 'hallucinations,' in which AI tools fabricate case citations and misquote authorities. The firm admitted that its policies and training on AI use were not followed, which led to the submission of inaccurate legal documents. The incident occurred in the Chapter 15 case of Prince Global Holdings, a company linked to a forced-labor scam. The firm expressed regret and outlined its comprehensive policies designed to prevent such errors.

Why It's Important?

This incident highlights the challenges and risks associated with integrating AI into legal workflows. As AI tools become more prevalent in the legal industry, ensuring accuracy and reliability remains a significant concern. The errors in Sullivan & Cromwell's filing underscore the need for rigorous human oversight and verification processes, even as AI technology advances. The situation also raises questions about the ethical and professional responsibilities of law firms in managing AI-generated content. The broader legal community may need to reassess its reliance on AI and implement stricter safeguards to prevent similar occurrences.

What's Next?

In response to this incident, Sullivan & Cromwell and other law firms may review and strengthen their AI policies and training programs. The legal industry could see increased scrutiny and regulation regarding the use of AI tools, with potential implications for how legal services are delivered. Clients may demand greater transparency and assurances of accuracy in AI-assisted legal work. Additionally, the development of new tools to detect and correct AI-generated errors may become a priority, as firms seek to maintain their reputations and avoid sanctions.