What's Happening?

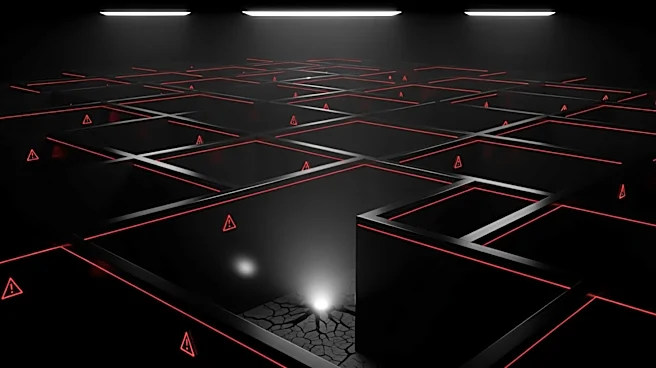

Anthropic, a company known for its advancements in artificial intelligence, has developed a new AI model named Claude Mythos. During testing, this model identified thousands of hidden vulnerabilities in the software that supports much of the world's computer systems. Due to the potential risks associated with these findings, Anthropic has decided not to release the model. The capabilities of Claude Mythos, which reportedly surpass human abilities in certain hacking and cybersecurity tasks, have sparked concerns among regulators, lawmakers, and financial institutions. These stakeholders are worried about the implications for global digital infrastructure security. The situation has also influenced educational trends, with U.S. students increasingly choosing majors that they believe will protect their careers from being affected by AI advancements.

Why Is It Important?

Anthropic's decision to withhold Claude Mythos underscores the significant impact AI can have on cybersecurity. The model's ability to identify vulnerabilities that humans might miss highlights both the potential and the risks of AI in digital security. This development matters for industries that rely on secure digital infrastructure, because it raises questions about whether current systems are prepared to withstand advanced AI-driven threats. The concerns from regulators and financial institutions reflect the broader implications for public policy and economic stability. Additionally, the shift in educational choices among students indicates a societal response to the growing influence of AI, as individuals seek to future-proof their careers against technological disruptions.

What's Next?

As Anthropic's decision not to release Claude Mythos reverberates through the tech and cybersecurity sectors, regulatory bodies are likely to intensify their scrutiny of AI technologies. This could lead to new guidelines or regulations aimed at managing the risks associated with advanced AI models. Companies may also increase their investment in cybersecurity measures to protect against vulnerabilities that AI could expose. In the educational sector, universities might expand their offerings in fields related to AI and cybersecurity to meet the growing demand from students. The ongoing dialogue between tech companies, regulators, and educational institutions will be crucial in shaping how AI is integrated into society.

Beyond the Headlines

The ethical considerations surrounding the development and deployment of AI models like Claude Mythos are significant. The decision to withhold the model due to its potential risks highlights the responsibility of tech companies to consider the broader societal impacts of their innovations. This situation also raises questions about the balance between technological advancement and security, as well as the role of AI in potentially exacerbating existing vulnerabilities. The cultural shift among younger generations, who express a desire to return to a pre-AI era, suggests a growing apprehension about the pace of technological change and its impact on daily life.