What's Happening?

AI-driven wellness planners are emerging as tools to assist with lower-severity mental health needs, such as processing emotions and practicing conversations. These tools offer general psychoeducation and are better likened to a 'study buddy' than to a therapist. However, they are not suitable for addressing higher-risk symptoms or crises, where human intervention is necessary. The use of AI in mental health is growing, with surveys indicating that a significant percentage of adolescents and young adults are turning to AI for mental health advice. Despite the potential benefits, there are concerns that AI may miss warning signs of mental health distress and foster misplaced trust. Experts emphasize the need for educational institutions to understand AI's role in mental health and to provide guidance on its appropriate use.

Why Is It Important?

The integration of AI in mental health support has significant implications for how mental health services are delivered, particularly in educational settings. AI tools can increase accessibility to mental health resources, especially for those who may not seek traditional therapy. However, the reliance on AI for mental health support raises ethical and safety concerns, as AI may not adequately address complex mental health issues. The potential for AI to miss critical warning signs and the risk of users forming emotional dependencies on AI highlight the need for careful oversight and regulation. Educational institutions and mental health professionals must navigate these challenges to ensure that AI is used responsibly and effectively, complementing rather than replacing human support.

What's Next?

As AI continues to be integrated into mental health support, educational institutions and mental health professionals will need to develop guidelines and training to ensure its responsible use. This includes educating students and staff about the capabilities and limitations of AI in mental health, as well as establishing protocols for when human intervention is necessary. Additionally, there may be increased advocacy for regulatory oversight to ensure that AI tools are safe and effective. Institutions may also explore partnerships with AI developers to create tools that are specifically designed to support mental health in educational settings.

Beyond the Headlines

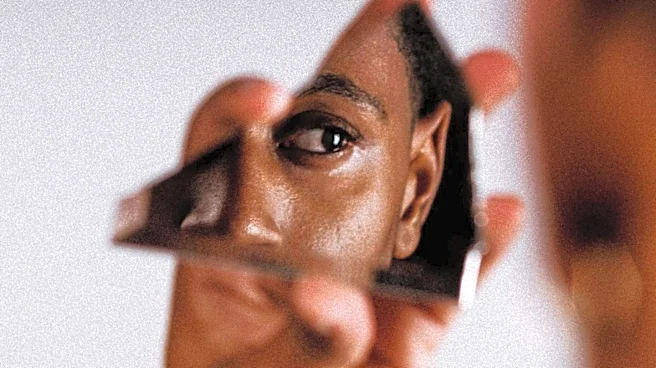

The use of AI in mental health raises broader ethical questions about the role of technology in human relationships and the potential for AI to exacerbate feelings of isolation. As AI becomes more integrated into daily life, there is a risk that individuals may rely on technology for emotional support, potentially diminishing the value of human interaction. This trend underscores the importance of fostering digital literacy and critical thinking skills, enabling individuals to navigate the complexities of AI and mental health responsibly. The conversation around AI and mental health also highlights the need for ongoing research to understand the long-term impacts of AI on mental well-being.