What's Happening?

Meta has announced the use of AI to analyze photos and videos for visual clues, such as height and bone structure, to identify users under 13 on Facebook and Instagram. This initiative is part of Meta's

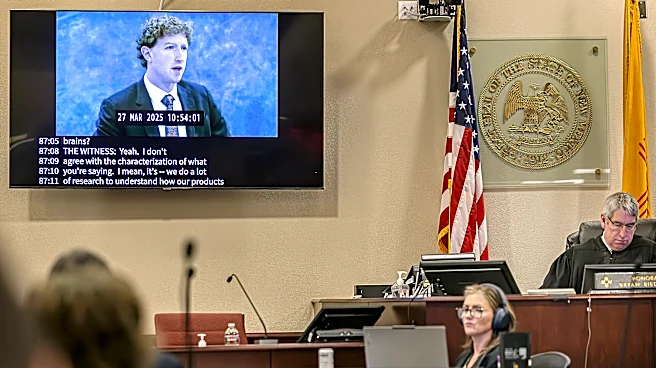

efforts to enhance child safety on its platforms. The AI system, which is not facial recognition, aims to increase the detection and removal of underage accounts. This move follows a recent legal ruling in New Mexico, where Meta was fined $375 million for misleading consumers about platform safety. The company is also expanding its 'Teen Accounts' feature to more regions, providing stricter safeguards for younger users.

Why Is It Important?

Meta's use of AI to enforce age restrictions reflects growing concerns about child safety on social media. This technology could significantly impact how platforms manage user age verification, potentially setting a precedent for other tech companies. The initiative also highlights the challenges of balancing user privacy with safety measures. As Meta faces legal and regulatory pressures, its actions could influence industry standards and prompt further scrutiny of tech companies' responsibilities in protecting minors online.