What's Happening?

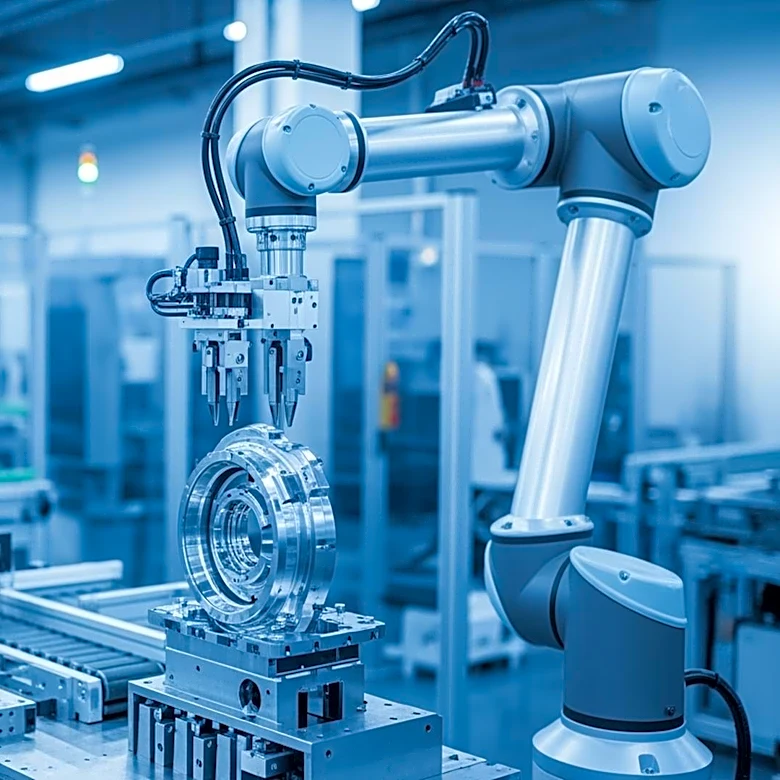

Anthropic has unveiled its latest AI model, Claude Mythos Preview, which is reportedly capable of identifying security vulnerabilities that have gone undetected for decades. The model has sparked significant concern, leading to emergency meetings among top financial and governmental leaders. Despite its potential, the model is not yet publicly available and is being tested by a select group of enterprise partners under Project Glasswing. The model's ability to uncover zero-day vulnerabilities across major operating systems has been highlighted, although some experts caution against taking Anthropic's claims at face value without further evidence.

Why It's Important?

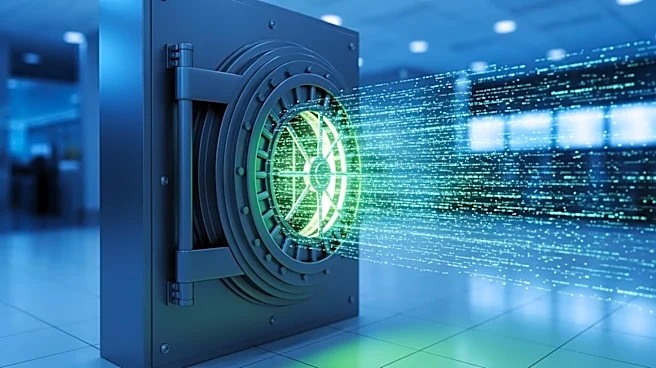

The capabilities of Anthropic's Mythos model highlight the double-edged nature of AI in cybersecurity. While the model's ability to detect vulnerabilities could strengthen defenses, the same ability poses a significant threat if misused. Its potential to disrupt major systems underscores the need for stringent security protocols and responsible AI deployment. The situation reflects a broader industry trend in which AI advancements are marketed as both revolutionary and potentially dangerous, raising questions about the balance between innovation and security. The response from industry leaders and policymakers will be crucial in shaping the future of AI in cybersecurity.

Beyond the Headlines

The marketing strategies employed by AI companies, including Anthropic, reveal a complex narrative in which products are simultaneously portrayed as groundbreaking and hazardous. This approach raises ethical questions about how AI technologies are portrayed and how that portrayal shapes public perception. The emphasis on the potential dangers of AI models may influence regulatory frameworks and public trust in AI systems. As AI continues to evolve, the industry must navigate these challenges to ensure that technological advancements align with societal values and security needs.