What's Happening?

A jury in Los Angeles has found Meta and YouTube liable for creating products that led to harmful and addictive behavior among young users. The case was brought by a plaintiff identified as Kaley, who claimed that her use of YouTube and Instagram from

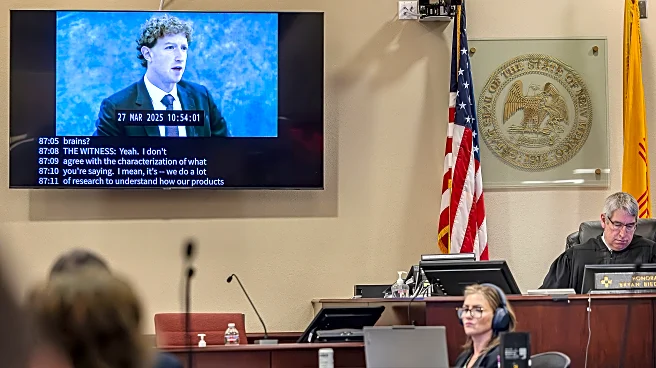

a young age resulted in addictive behavior and contributed to mental health issues such as depression and body dysmorphia. The jury awarded $3 million in damages to Kaley, marking a significant legal precedent for similar cases against social media companies. The trial involved testimony from Meta CEO Mark Zuckerberg and Instagram head Adam Mosseri, drawing parallels to tobacco industry lawsuits from the 1990s. The case focused on the design of the apps rather than the content, challenging the protections offered by Section 230 of the Communications Decency Act.

Why It's Important

This verdict is significant because it challenges the legal protection that social media companies have traditionally relied upon: Section 230, which shields them from liability for user-generated content. By focusing on the design of the platforms rather than what users post on them, this case could pave the way for more lawsuits targeting the addictive nature of social media products. The decision may prompt tech companies to reevaluate their product designs and implement more robust safety measures for young users. It also highlights growing concern about the mental health impacts of social media, which could shape public policy and regulatory approaches to tech industry practices.

What's Next?

Meta has said it intends to appeal the decision, so the legal battle may continue. The outcome of this case could influence future litigation against social media companies by encouraging more plaintiffs to come forward with similar claims. The verdict may also lead to increased scrutiny from regulators and lawmakers, potentially resulting in new regulations aimed at curbing the addictive nature of social media platforms. Companies may face pressure to be more transparent and accountable about their product designs and the impact of those designs on users' mental health.