What's Happening?

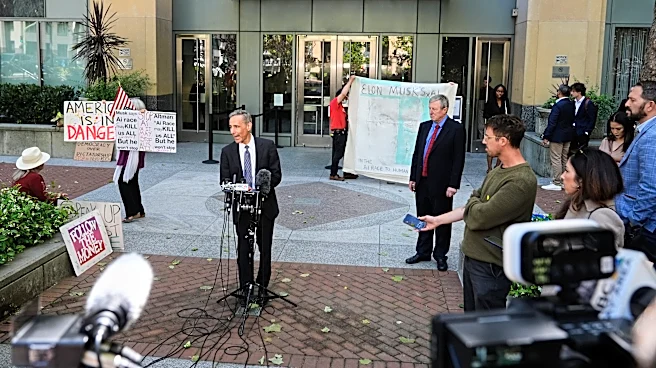

Elon Musk has taken Sam Altman, a rival in the artificial intelligence sector, to court, revealing insights into their professional and personal dynamics. During the trial, Musk explained his initial financial support for OpenAI, a nonprofit focused on AI development, as a counterbalance to Google's AI efforts, which he perceived as lacking in safety considerations. Musk expressed concerns about AI potentially leading to catastrophic outcomes if mishandled. However, when Musk left OpenAI to pursue his own AI venture, he reportedly shifted his focus away from safety to compete with Google's AI lab, DeepMind. This shift was highlighted by OpenAI President Greg Brockman, who testified that Musk prioritized catching up to DeepMind over AI safety, likening the situation to a battle between 'wolves' and 'sheep'.

Why It's Important?

The courtroom clash between Musk and Altman underscores the ongoing debate over AI safety and the ethical responsibilities of tech leaders. Musk's initial support for OpenAI was driven by a desire to ensure AI development was conducted safely, reflecting broader concerns about the potential risks of AI technologies. The trial highlights the tension between rapid technological advancement and the need for safety protocols. This case could influence public and industry perceptions of AI development, potentially impacting regulatory approaches and investment strategies in the tech sector. Stakeholders in the AI and technology industries are closely watching the outcome, as it may set precedents for how AI safety is prioritized in future projects.

What's Next?

The trial's outcome could have significant implications for AI development and safety standards. If Musk's approach is validated, it may encourage other tech leaders to prioritize competitive advancement over safety, potentially leading to increased regulatory scrutiny. Conversely, if Altman's perspective gains traction, it could bolster efforts to implement stricter safety measures in AI research. The tech industry, policymakers, and AI researchers are likely to respond to the trial's findings, potentially influencing future AI policies and collaborations. The case may also prompt discussions about the ethical responsibilities of tech leaders in balancing innovation with safety.