What's Happening?

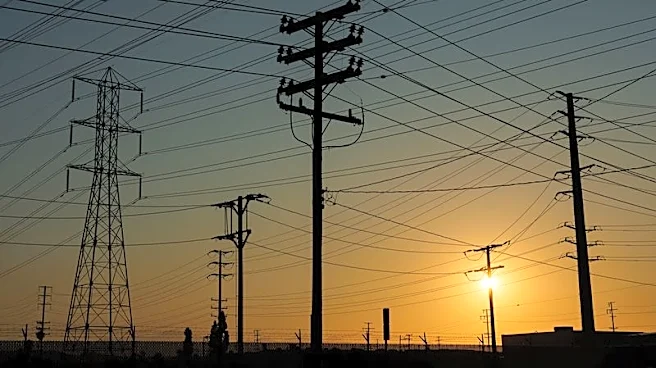

Nvidia is addressing the energy demands of AI by planning to build mini data centers next to local power substations in the U.S. The initiative aims to manage the power load more efficiently by using spare capacity at those substations. The plan calls for roughly 25 small data centers that can scale their compute up or down according to the varying demands on each substation. The goal is not to reduce power consumption but to balance the load across the power grid. Nvidia's strategy also includes building more GPUs to equip these smaller sites. Together, these steps could help manage the anticipated rise in data center power consumption, which is projected to reach 17% of U.S. electricity generation by 2030.

Why Is It Important?

The establishment of mini data centers by Nvidia is significant as it addresses the growing energy demands of AI technology, which is expected to consume a substantial portion of electricity in the coming years. By optimizing the use of existing power infrastructure, Nvidia's approach could help prevent potential power shortages and ensure the sustainability of AI advancements. This move also highlights the increasing importance of energy efficiency in the tech industry, as companies seek to balance technological growth with environmental considerations. Additionally, Nvidia's strategy could set a precedent for other tech companies facing similar energy challenges.

What's Next?

Nvidia's pilot project for mini data centers is expected to commence later this year. The success of this initiative could lead to a broader implementation across the U.S., potentially influencing other tech companies to adopt similar strategies. Stakeholders, including energy providers and tech companies, may closely monitor the outcomes to assess the feasibility and scalability of such solutions. Furthermore, regulatory bodies might consider new policies to support innovative energy management practices in the tech industry.