What's Happening?

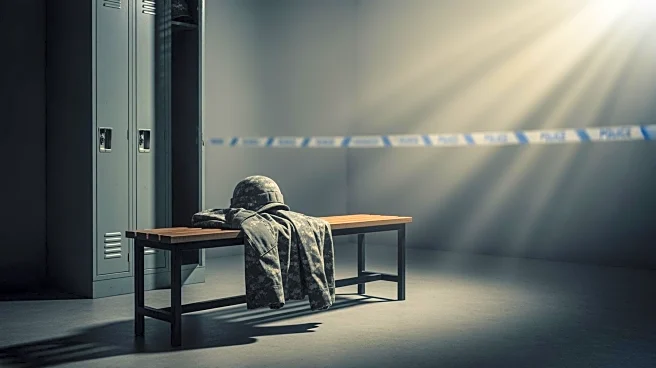

Tensions have escalated between the Pentagon and Anthropic, an AI company known for its Claude chatbot system, over the use of AI in military operations. Anthropic, which has a defense contract worth up to $200 million, has been involved in providing AI services for national security purposes. The conflict arose after reports suggested that Anthropic's AI was used in a military operation to capture Venezuelan President Nicolás Maduro. Although Anthropic has not confirmed the use of its AI in this operation, the incident has raised concerns within the company about the potential use of its technology in military contexts. Anthropic has maintained that its AI systems should not be used in lethal autonomous weapons or domestic surveillance, which contrasts with the Pentagon's recent strategy to eliminate company-specific constraints on AI use.

Why It's Important?

The disagreement highlights the broader debate over the ethical use of AI in military applications. As AI technology becomes increasingly integrated into defense strategies, companies like Anthropic face pressure to balance their ethical guidelines with government demands. The Pentagon's push for unrestricted use of AI systems could set a precedent for future contracts, potentially impacting how AI is deployed in military operations. This situation underscores the challenges AI companies face in maintaining ethical standards while fulfilling government contracts, which could influence public perception and regulatory policies surrounding AI in defense.

What's Next?

The Pentagon is reviewing its relationship with Anthropic, and ongoing negotiations may determine the future of their collaboration. The outcome could influence how AI companies structure their contracts with the military, particularly regarding ethical constraints. As the Pentagon seeks to incorporate AI into its operations without limitations, Anthropic and similar companies may need to reassess their policies to align with government expectations while maintaining their ethical commitments. The resolution of this conflict could have significant implications for the role of AI in national security and the ethical considerations surrounding its use.