What's Happening?

Meta has announced the implementation of artificial intelligence to analyze users' height and bone structure to determine if they are underage, specifically under 13 years old, on its platforms Facebook and Instagram. This initiative is part of Meta's

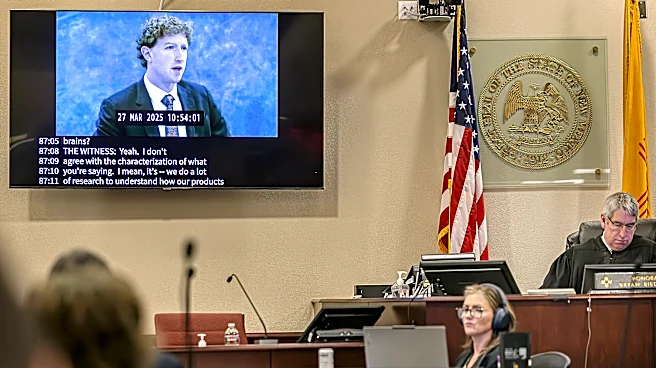

broader efforts to enhance child safety by identifying and removing underage accounts. The AI system, which is not facial recognition, uses visual cues and contextual analysis of user profiles to estimate age. This move follows a recent legal ruling where a New Mexico jury ordered Meta to pay $375 million in civil penalties for misleading consumers about platform safety and endangering children. Meta has also threatened to shut down its services in New Mexico in response to the ruling. The company plans to expand this AI technology to more features within its apps, including Instagram Live and Facebook Groups.

Why It's Important?

Meta's introduction of AI for age verification is significant because it responds to growing concerns about child safety on social media platforms. The development could set a precedent for other tech companies to adopt similar measures, potentially leading to industry-wide changes in how underage users are identified and handled online. The legal challenges Meta faces highlight the increasing scrutiny of tech giants over user safety and data privacy. The financial penalties and the threatened service shutdown in New Mexico underscore the serious consequences of failing to protect young users. The situation could influence public policy and regulatory approaches toward social media companies, emphasizing the need for robust safety measures and transparent practices.

What's Next?

Meta's AI system is currently operational in select countries, with plans for a broader rollout. The company is also expanding its 'Teen Accounts' feature, which applies stricter account settings for teenagers, to more countries, including the U.S. This expansion indicates Meta's commitment to enhancing user safety, though the company may face further legal and regulatory challenges. Stakeholders, including parents, regulators, and child safety advocates, will likely monitor these developments closely. The outcome of Meta's legal battles and the effectiveness of its new safety measures could influence future regulations and industry standards.