What's Happening?

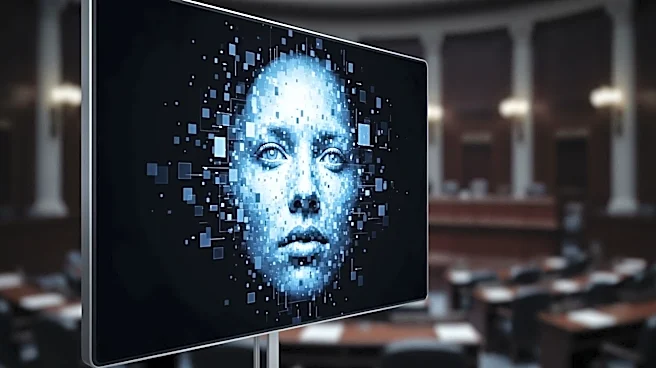

YouTube is stepping up its efforts to combat deepfakes by expanding a tool that lets users register their likeness, receive notifications when it is detected in unauthorized content, and request that content's removal. The initiative is part of a broader effort to address the challenges posed by deepfakes: AI-generated videos that can mislead viewers and cause real harm. The expansion comes as Congress considers the NO FAKES Act, a bipartisan bill that aims to regulate deepfakes while protecting free speech. There is currently no federal law that specifically addresses deepfakes, which leaves platforms like YouTube to navigate a complex legal landscape on their own. Without clear legal guidelines, platforms have become de facto arbiters of content, a role that makes it difficult to distinguish harmful deepfakes from protected speech such as parody or satire.

Why It's Important

The expansion of YouTube's deepfake detection tool is significant because it responds to growing concern over the misuse of AI to create deceptive content. Deepfakes threaten people's privacy and reputations, and they can be used for malicious purposes such as spreading misinformation or defaming individuals. The absence of a comprehensive legal framework leaves platforms open to exploitation by deepfake creators while also risking the over-censorship of legitimate content. The NO FAKES Act seeks to provide a legal structure for managing these issues, but critics have raised concerns about potential overreach and the suppression of free speech. The outcome of these legislative efforts will shape how digital platforms moderate content and protect users' rights, affecting both the tech industry and individual users.

What's Next?

As Congress continues to deliberate on the NO FAKES Act, stakeholders are closely watching for updates that might address concerns about over-censorship and the protection of free speech. An updated version of the bill is expected to introduce a counternotice system to contest takedowns, which could provide a more balanced approach to managing deepfake content. Meanwhile, YouTube and other platforms will need to continue refining their tools and policies to effectively manage deepfakes without infringing on users' rights. The ongoing development of AI technology and its applications will likely prompt further legislative and regulatory responses in the future.

Beyond the Headlines

The struggle to regulate deepfakes highlights broader issues related to the rapid advancement of technology and the lag in corresponding legal frameworks. This gap creates challenges not only for platforms but also for individuals whose likenesses may be used without consent. The ethical implications of deepfakes extend to questions of identity, consent, and the potential for AI to be used in harmful ways. As technology continues to evolve, society will need to grapple with these issues and develop solutions that protect individuals while fostering innovation.