What's Happening?

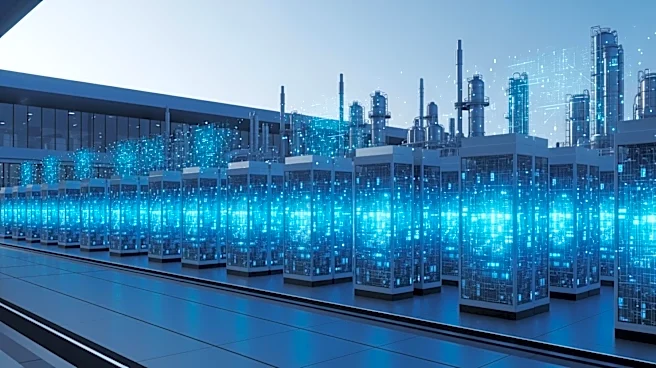

In the Nevada desert, construction is underway on a new hyperscale data center campus, a significant development in AI infrastructure. The expansion comes amid a broader trend toward edge computing, which distributes computational resources closer to where data is generated. This approach contrasts with traditional centralized data centers, which draw significant power and are often located far from data sources. The shift is driven by the need to reduce latency, manage energy demands, and comply with regulatory requirements. Edge computing shortens response times by reducing the distance data must travel, which is crucial for latency-sensitive applications such as autonomous vehicles and smart manufacturing.

Why Is It Important?

The move toward edge computing represents a fundamental change in how data infrastructure is built and used. By decentralizing data processing, companies can achieve lower latency and better match facilities to local energy supplies, which matters more as power availability becomes a limiting factor for large data centers. The shift also addresses regulatory challenges, as a growing number of jurisdictions require certain data to be processed within their borders. The transition to edge computing could lead to more efficient and sustainable data management practices and reshape where investment in digital infrastructure is directed.

What's Next?

As demand for edge computing grows, more companies are likely to invest in smaller, regional data centers. This could increase competition in the data infrastructure market and drive innovation in energy-efficient technologies. Regulators may also update policies to address the specific challenges and opportunities that edge computing presents. Technology companies, investors, and other stakeholders will need to adapt to these changes to capitalize on this evolving sector.