What's Happening?

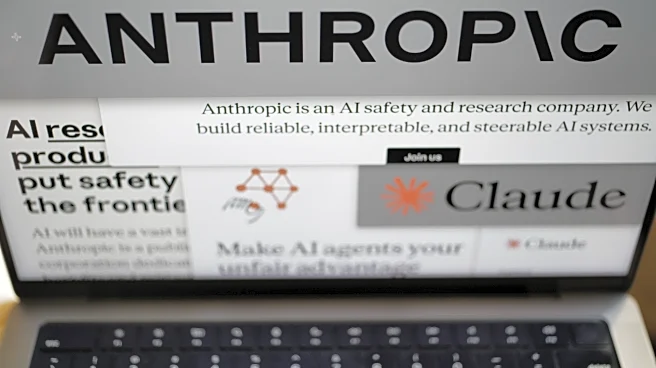

Defense Secretary Pete Hegseth is pressuring Anthropic, an AI company, to remove restrictions on its AI model Claude, which is used for military applications. Anthropic has stipulated that its technology should not be used for mass surveillance or lethal autonomous weapons. Hegseth has threatened to invoke the Defense Production Act to compel compliance or label Anthropic a supply-chain risk. This dispute highlights the tension between private tech companies and government demands, as the Pentagon seeks to use AI technology without constraints. Anthropic's stance reflects concerns about ethical AI use, while the Pentagon prioritizes national security.

Why Is It Important?

The conflict between Anthropic and the Pentagon underscores the broader debate over government control of AI technology. As AI becomes integral to national defense, the balance between ethical considerations and security needs becomes increasingly complex. The outcome of this dispute could influence how AI is governed and utilized by the military, potentially setting a precedent for other tech companies. The situation also reflects the changing relationship between Silicon Valley and the government, as tech firms become more entwined with national security interests.

What's Next?

Anthropic faces a deadline to comply with Pentagon demands, with potential consequences for its business and reputation. The company must decide whether to hold to its ethical guidelines and risk government intervention, or to relax them and comply. The resolution of this dispute could impact Anthropic's valuation and its role in the AI industry. The broader implications for AI governance and military applications remain uncertain, as stakeholders navigate the intersection of technology, ethics, and national security.