What's Happening?

The U.S. federal government has quietly removed its AI hiring guidance from the Equal Employment Opportunity Commission (EEOC) website, leaving a gap in federal oversight as states enact their own AI employment laws. The EEOC's guidance, which was non-binding,

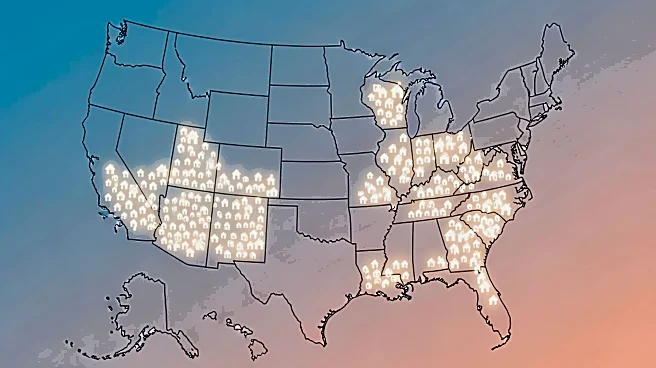

provided technical assistance on how existing employment laws apply to AI tools. Despite the removal, the legal obligations under Title VII of the Civil Rights Act of 1964 remain unchanged, prohibiting both disparate treatment and disparate impact in employment. Meanwhile, states like California, Illinois, Texas, and Colorado have introduced their own AI employment regulations, each with distinct legal standards. California's regulations, effective October 2025, apply a disparate impact framework and extend liability to AI vendors. Illinois allows private action for AI discrimination, while Texas requires proof of intent to discriminate. Colorado mandates a 'reasonable care' standard for high-risk AI systems, offering an affirmative defense for compliance with the NIST AI Risk Management Framework.

Why Is It Important?

The removal of federal guidance on AI hiring tools creates a regulatory vacuum and increases complexity for businesses operating across multiple states. Employers must now navigate a patchwork of state laws, each with its own compliance requirements. This raises legal risk and operational burden, particularly for companies that use AI in employment decisions. The divergence among state laws highlights the absence of a unified federal framework and could lead to inconsistent enforcement and legal uncertainty. Businesses may face significant liability if they fail to comply with varying state standards, especially as states like Illinois allow private lawsuits for AI-related discrimination. The evolving legal landscape underscores the need for robust compliance programs and due diligence in AI vendor relationships.

What's Next?

Employers using AI in hiring must adapt to the changing regulatory environment by implementing comprehensive compliance strategies tailored to each state's laws. They should document AI tool usage, conduct bias testing, and ensure vendor accountability. The ongoing Mobley v. Workday case, which questions AI vendor liability for discriminatory outcomes, could further influence how companies manage AI tools. A ruling against Workday could set a precedent, reshaping employer-vendor relationships nationwide. Additionally, businesses should monitor developments in state legislation and court rulings to stay compliant and mitigate legal risks. The absence of federal guidance may prompt further state-level initiatives, potentially leading to more fragmented regulations.

Beyond the Headlines

The removal of the EEOC's AI guidance reflects broader shifts in federal policy under new leadership, which may be deprioritizing AI-related employment discrimination. This change may influence how AI tools are perceived and regulated, affecting innovation and adoption in the industry. The lack of federal oversight could also erode public trust in AI systems, especially if state laws fail to address concerns about bias and fairness effectively. As AI continues to transform the workforce, ethical considerations around transparency, accountability, and equity will remain critical. The evolving legal landscape may drive businesses to adopt voluntary frameworks like the NIST AI Risk Management Framework (AI RMF) to demonstrate responsible AI use.