What's Happening?

Meta is deploying new artificial intelligence technology on its platforms, Facebook and Instagram, to identify users under the age of 13. This system estimates age by analyzing visual cues such as height

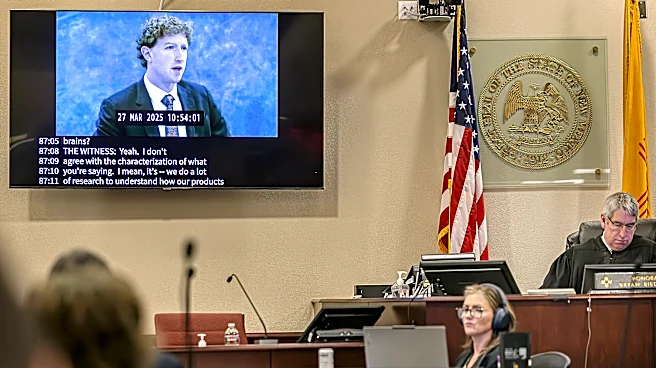

and bone structure, rather than using facial recognition. The AI also considers contextual information from text messages and user interactions, like birthday celebrations or school grade mentions. If a user is identified as underage, their account is temporarily restricted until identity verification documents are provided. This initiative follows a court ruling in New Mexico that fined Meta $375 million for misleading consumers about platform safety. Additionally, Meta is expanding its 'Teen Accounts' feature on Instagram to 27 countries, enhancing safety measures for teens by restricting messages to known contacts, hiding harmful comments, and setting accounts to private mode automatically.

Why It's Important?

Using AI to identify underage users addresses growing concerns about child safety on social media. By proactively detecting and restricting underage accounts, Meta aims to comply with legal requirements and improve user safety. The move carries added weight after the $375 million fine imposed by a New Mexico court, which highlights the legal and financial risks of inadequate safety measures. Expanding the 'Teen Accounts' feature signals a broader effort to protect younger users, which could improve public perception of, and trust in, the platform. However, AI-based age estimation raises privacy concerns as well as questions about accuracy and user consent: an adult wrongly flagged as underage, for instance, would have their account restricted until they submit identity documents.

What's Next?

Meta's initiative may prompt other social media companies to adopt similar measures to improve child safety and meet legal standards. How accurately the AI system identifies underage users will be closely watched, and any shortcomings could lead to further legal scrutiny or regulatory action. The expansion of safety features to more countries also suggests a global strategy to standardize user protections. Stakeholders, including parents, regulators, and privacy advocates, will likely continue to scrutinize how Meta balances safety with privacy rights.