Optical character recognition (OCR) converts images of text into machine-readable data through a chain of image-processing and recognition steps. This article explores the technical side of OCR: the core recognition algorithms, the pre-processing and post-processing steps around them, and the role of machine learning in modern systems.

Core Algorithms and Techniques

At the heart of OCR technology are two primary types of algorithms: matrix matching and feature extraction. Matrix matching, also known as pattern matching, involves comparing an image to a stored glyph on a pixel-by-pixel basis. This technique is effective for typewritten text but struggles with new fonts or distorted images. Feature extraction, on the other hand, decomposes glyphs into features like lines and loops, making the recognition process more efficient and adaptable to various fonts and styles.
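Matrix matching can be illustrated with a small sketch. The 5x3 bitmaps, the `TEMPLATES` table, and the function names below are hypothetical, chosen only for illustration; the sketch assumes the input glyph has already been isolated and scaled to the same size as the stored templates.

```python
# Hypothetical 5x3 binary glyph templates ("1" = ink, "0" = background).
TEMPLATES = {
    "I": ["111", "010", "010", "010", "111"],
    "L": ["100", "100", "100", "100", "111"],
    "T": ["111", "010", "010", "010", "010"],
}


def pixel_agreement(image, template):
    """Return the fraction of pixels on which two same-sized bitmaps agree."""
    total = 0
    matches = 0
    for image_row, template_row in zip(image, template):
        for a, b in zip(image_row, template_row):
            total += 1
            matches += (a == b)
    return matches / total


def match_glyph(image, templates=TEMPLATES):
    """Pick the stored glyph with the highest pixel-by-pixel agreement."""
    scores = {label: pixel_agreement(image, tpl) for label, tpl in templates.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# A "T" with one stray pixel still matches the "T" template best.
label, score = match_glyph(["111", "010", "110", "010", "010"])
print(label, round(score, 3))
```

Because every pixel counts equally, a glyph drawn in a different font or shifted by a pixel or two can score poorly against the correct template, which is the weakness described above.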

Modern OCR systems often employ a two-pass approach to character recognition. The first pass identifies letter shapes with high confidence, while the second pass, known as adaptive recognition, uses this information to improve accuracy for the remaining letters. This method is particularly useful for handling unusual fonts or low-quality scans, where the text may be blurred or faded.
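The two-pass idea can be sketched roughly as follows. This is a simplified illustration, not how any particular OCR engine implements adaptive recognition: it assumes glyphs are small same-sized bitmaps, uses plain pixel agreement as the confidence score, and the `threshold` value and all function names are hypothetical.

```python
def agreement(a, b):
    """Fraction of pixels on which two same-sized bitmaps (lists of strings) agree."""
    pairs = [(x, y) for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b)]
    return sum(x == y for x, y in pairs) / len(pairs)


def best_match(glyph, templates):
    """Return (label, score) of the closest bitmap; templates maps label -> list of bitmaps."""
    best_label, best_score = None, 0.0
    for label, bitmaps in templates.items():
        for bitmap in bitmaps:
            score = agreement(glyph, bitmap)
            if score > best_score:
                best_label, best_score = label, score
    return best_label, best_score


def two_pass_recognize(glyphs, generic_templates, threshold=0.9):
    # Pass 1: match against generic templates and note the confident results.
    first = [best_match(g, generic_templates) for g in glyphs]
    adapted = {label: list(bitmaps) for label, bitmaps in generic_templates.items()}
    for glyph, (label, score) in zip(glyphs, first):
        if score >= threshold:
            adapted[label].append(glyph)  # learn this document's letter shapes

    # Pass 2: re-run only the uncertain glyphs against the adapted templates.
    result = []
    for glyph, (label, score) in zip(glyphs, first):
        if score < threshold:
            label, score = best_match(glyph, adapted)
        result.append((label, score))
    return result


generic = {
    "I": [["111", "010", "010", "010", "111"]],
    "T": [["111", "010", "010", "010", "010"]],
}
clean_t = ["111", "010", "110", "010", "010"]   # this document's "T" shape
faded_t = ["111", "010", "110", "010", "011"]   # same shape with extra damage
print(two_pass_recognize([clean_t, faded_t], generic))
```

In this toy input the damaged glyph is equally close to the generic "I" and "T" templates, but once the confidently recognized "T" from the same document is added as a template, the second pass resolves it as "T".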

Pre-processing and Post-processing Techniques

OCR software typically includes pre-processing steps to enhance the chances of successful recognition. These steps may involve image segmentation, where the text is divided into individual characters or words, and noise reduction, which removes unwanted artifacts from the image. For fixed-pitch fonts, segmentation is straightforward, but proportional fonts require more sophisticated techniques due to varying whitespace between characters.
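The difference between the two segmentation cases can be sketched in a few lines. The example assumes a single text line that has already been binarized into rows of "1" (ink) and "0" (background); both function names are hypothetical. Splitting at blank columns is only one simple approach for proportional fonts and would fail on touching or overlapping characters.

```python
def segment_fixed_pitch(line, cell_width):
    """Cut a line bitmap into equal-width cells (assumes a fixed-pitch font)."""
    width = len(line[0])
    return [[row[x:x + cell_width] for row in line]
            for x in range(0, width, cell_width)]


def segment_by_blank_columns(line):
    """Cut a line bitmap wherever a column contains no ink ("1")."""
    width = len(line[0])
    ink = [any(row[x] == "1" for row in line) for x in range(width)]
    spans, start = [], None
    for x, has_ink in enumerate(ink):
        if has_ink and start is None:
            start = x                      # a glyph begins
        elif not has_ink and start is not None:
            spans.append((start, x))       # a glyph ends at a blank column
            start = None
    if start is not None:
        spans.append((start, width))       # glyph runs to the right edge
    return [[row[a:b] for row in line] for a, b in spans]


# A narrow glyph and a wider one, separated by uneven whitespace.
line = [
    "1001000",
    "1001000",
    "1001110",
]
for glyph in segment_by_blank_columns(line):
    print(glyph)
```

With a fixed-pitch font the cell width is known in advance, so `segment_fixed_pitch` needs no inspection of the pixels at all; the proportional case has to look for the gaps.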

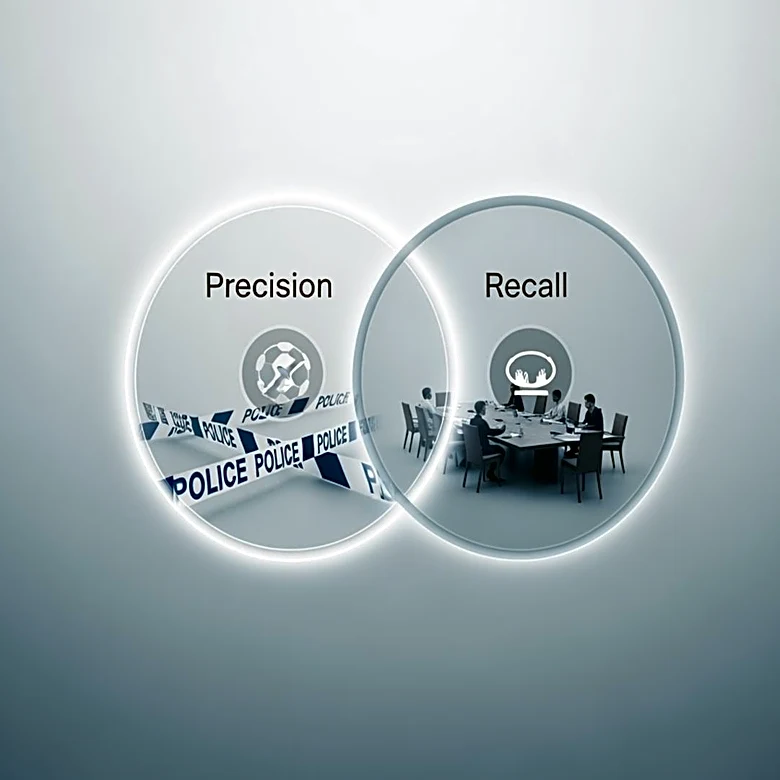

Post-processing techniques further refine the OCR output. These may include using a lexicon to constrain the recognized text to a list of allowed words, improving accuracy by reducing the likelihood of nonsensical outputs. Additionally, string-similarity measures such as the Levenshtein distance, which counts the single-character insertions, deletions, and substitutions needed to turn one string into another, can be used to correct errors by replacing an unrecognized word with the closest known word or phrase.
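A lexicon-based correction step using the Levenshtein distance might look like the following minimal sketch. The `correct_word` helper, the `max_distance` cutoff, and the sample lexicon are assumptions made for illustration, not part of any specific OCR package.

```python
def levenshtein(a, b):
    """Edit distance between two strings (insertions, deletions, substitutions)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


def correct_word(word, lexicon, max_distance=2):
    """Return the closest lexicon entry, or the word unchanged if none is close enough."""
    if not lexicon or word in lexicon:
        return word
    best = min(lexicon, key=lambda entry: levenshtein(word, entry))
    return best if levenshtein(word, best) <= max_distance else word


lexicon = ["recognition", "recondition", "character"]
print(correct_word("rec0gniti0n", lexicon))  # zeros misread in place of the letter "o"
```

The cutoff matters: without it, every unknown token, including names and numbers that are legitimately absent from the lexicon, would be forced onto some dictionary word.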

Advances in Machine Learning and Neural Networks

Recent advancements in machine learning and neural networks have significantly enhanced OCR capabilities. Systems like Tesseract and OCRopus utilize neural networks trained to recognize entire lines of text, rather than focusing on individual characters. This approach improves accuracy and allows for the recognition of more complex scripts and languages.

The integration of machine learning has also enabled OCR systems to adapt to new fonts and styles more effectively. By training on large datasets, these systems can learn to recognize a wide variety of text inputs, making them more versatile and robust in real-world applications.

As OCR technology continues to advance, the processes and algorithms that drive it will likely become more sophisticated, improving accuracy and extending support to more languages and scripts.