Intrinsic motivation, a concept often associated with human psychology, has found its way into the realm of artificial intelligence (AI) and robotics. This approach focuses on enabling machines to learn and adapt through curiosity-driven behaviors, much like humans do when they explore for the sake of learning itself. In AI, intrinsic motivation is used to develop agents that can autonomously learn and improve their performance in various tasks without relying solely on external rewards.

Intrinsic Motivation in AI: A New Frontier

In the context of AI, intrinsic motivation is about creating systems that learn and adapt by exploring their environments. This is akin to how humans engage in activities for the sheer joy or challenge they present, rather than for external rewards. The concept is applied to develop agents that acquire general skills or behaviors, such as resource acquisition, that improve their performance across tasks. It is particularly useful in scenarios where external rewards are sparse, not clearly defined, or difficult to implement.

Intrinsic motivation in AI is often studied within the framework of computational reinforcement learning. Here, the rewards that drive agent behavior are generated internally, for example from the novelty of a state or from the agent's own prediction error, rather than being imposed externally by a task designer. Because reinforcement learning is agnostic to how a reward is produced, the agent still learns its policy from the distribution of rewards it encounters, which leads to more autonomous and adaptable learning.
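As a rough illustration of how an intrinsic signal can enter a reinforcement learning update, the sketch below adds a weighted intrinsic reward to the extrinsic one inside a single tabular Q-learning step. The function name `q_update`, the weighting parameter `beta`, and the default step size and discount are assumptions made for this example; they are one common way to combine the two signals, not a prescribed method.

```python
from collections import defaultdict

def q_update(q, state, action, next_state, actions, r_ext, r_int,
             beta=0.5, alpha=0.1, gamma=0.99):
    """One tabular Q-learning step on the combined reward r_ext + beta * r_int."""
    # beta (hypothetical) controls how strongly the intrinsic signal counts.
    reward = r_ext + beta * r_int
    # Greedy value of the next state over the available actions.
    best_next = max(q[(next_state, a)] for a in actions)
    td_error = reward + gamma * best_next - q[(state, action)]
    q[(state, action)] += alpha * td_error
    return q[(state, action)]

q = defaultdict(float)           # Q-values default to 0.0
actions = ["left", "right"]

# No extrinsic reward at all: the update is driven purely by the intrinsic signal.
value = q_update(q, state=0, action="right", next_state=1,
                 actions=actions, r_ext=0.0, r_int=1.0)
```

With `r_ext` set to zero the value estimate still moves, which is the point of the approach: the agent has something to learn from even when the task itself provides no feedback.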

Curiosity and Exploration in AI

Curiosity and exploration are key components of intrinsically motivated AI systems. These systems are designed to explore their environments to reduce uncertainty and learn about the dynamics of their surroundings. This is achieved by encouraging the agent to explore as much of the environment as possible, which helps in learning the transition functions and reward structures of the environment.
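One way to make "reducing uncertainty about the dynamics" concrete is to let the agent keep an estimate of the transition function and reward itself for transitions that the estimate predicts poorly. The sketch below assumes a small discrete environment and uses a count-based model with Laplace smoothing; the class name `TransitionModel`, the `prior` parameter, and the choice of negative log-probability as the surprise measure are illustrative assumptions.

```python
import math
from collections import defaultdict

class TransitionModel:
    """Tabular estimate of p(next_state | state, action) from observed counts."""

    def __init__(self, n_states, prior=1.0):
        self.n_states = n_states
        # Laplace smoothing so unseen transitions keep a nonzero probability.
        self.prior = prior
        self.counts = defaultdict(lambda: defaultdict(float))

    def prob(self, state, action, next_state):
        row = self.counts[(state, action)]
        total = sum(row.values()) + self.prior * self.n_states
        return (row[next_state] + self.prior) / total

    def surprise(self, state, action, next_state):
        """Intrinsic reward: negative log-probability of the observed transition."""
        return -math.log(self.prob(state, action, next_state))

    def update(self, state, action, next_state):
        self.counts[(state, action)][next_state] += 1.0

model = TransitionModel(n_states=4)
first = model.surprise(0, "right", 1)   # nothing observed yet: uniform guess over 4 states
for _ in range(20):
    model.update(0, "right", 1)
later = model.surprise(0, "right", 1)   # the same transition is now well predicted
```

The surprise for a transition falls as the model sees it more often, so an agent rewarded this way is pushed toward parts of the environment whose dynamics it has not yet learned.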

Intrinsic motivation encourages agents to seek out novel experiences and information-rich areas of their environment. Particularly when external rewards are sparse, this can lead to faster learning and more efficient exploration, because the agent is rewarded for discovering new information rather than only for reaching an externally specified goal.
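A simple way to reward novelty is a count-based bonus that shrinks each time a state is revisited. The sketch below assumes hashable, discrete states; the function name `novelty_bonus` and the 1/sqrt(count) form are illustrative choices rather than the only option.

```python
import math
from collections import Counter

visit_counts = Counter()

def novelty_bonus(state):
    """Record a visit and return a bonus that shrinks as 1 / sqrt(visit count)."""
    visit_counts[state] += 1
    return 1.0 / math.sqrt(visit_counts[state])

# State "A" is visited repeatedly; state "B" is seen only once.
trajectory = ["A", "A", "B", "A", "A"]
bonuses = [novelty_bonus(s) for s in trajectory]
```

In this trajectory the first visit to each state earns the full bonus, while the fourth visit to "A" earns only half of it, so rarely visited states stay comparatively attractive.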

Challenges and Future Directions

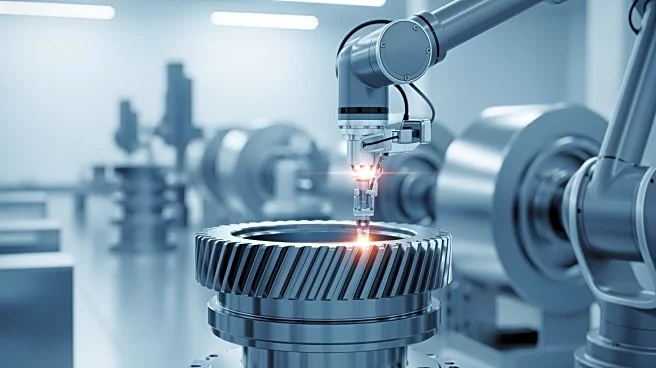

Despite the promising applications of intrinsic motivation in AI, there are challenges that need to be addressed. One of the main issues is the ability of AI systems to generalize their learning across different tasks and environments. While intrinsic motivation can drive exploration and learning, the challenge lies in reusing learned policies and compressing complex state spaces into meaningful representations.

Future research in this area aims to overcome these challenges by developing more sophisticated models of intrinsic motivation that can better mimic human-like learning processes. By doing so, AI systems can become more versatile and capable of adapting to a wider range of tasks and environments, ultimately leading to more autonomous and intelligent machines.