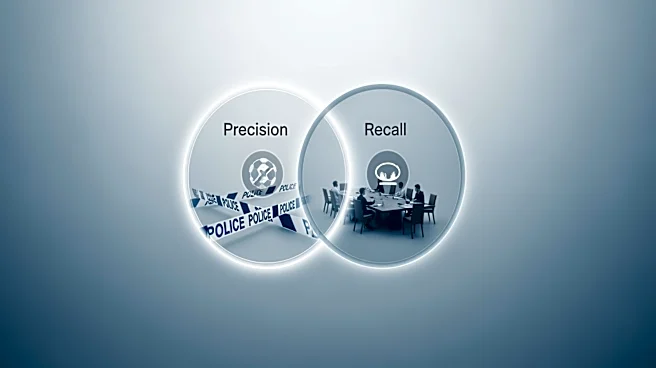

Information retrieval systems, such as search engines, rely heavily on precision and recall to evaluate their effectiveness. These metrics help determine how well a system retrieves relevant information from a vast collection of data. By understanding precision and recall, developers can optimize their systems to provide users with accurate and comprehensive search results.

Precision in Information Retrieval

Precision in information retrieval refers to the fraction of relevant instances among the retrieved instances. It is calculated as the number of relevant retrieved instances divided by the total number of retrieved instances. Precision is crucial for ensuring that users receive accurate search results that match their queries.
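Written as a formula, with the relevant documents and the retrieved documents treated as sets:

$$
\text{Precision} = \frac{\lvert \{\text{relevant documents}\} \cap \{\text{retrieved documents}\} \rvert}{\lvert \{\text{retrieved documents}\} \rvert}
$$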

For example, if a search engine retrieves 100 documents and 30 of them are relevant, its precision is 30 / 100 = 0.3. This means that 30% of the retrieved documents are relevant to the user's query. High precision is essential for user satisfaction, as it reduces the number of irrelevant results and helps users find the information they need quickly.
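A minimal Python sketch of the calculation, assuming documents are identified by string IDs held in sets. The function name `precision` and the document IDs are hypothetical, chosen only to reproduce the 30-out-of-100 example. Returning 0.0 when nothing is retrieved is an assumed convention, since precision is undefined in that case.

```python
def precision(retrieved: set[str], relevant: set[str]) -> float:
    """Fraction of the retrieved documents that are relevant."""
    if not retrieved:
        # Assumed convention: precision is undefined with no results; report 0.0.
        return 0.0
    return len(retrieved & relevant) / len(retrieved)


# Hypothetical IDs: 100 documents retrieved, 30 of them relevant.
retrieved = {f"doc{i}" for i in range(100)}
# 30 relevant documents that were retrieved, plus 20 relevant ones that were not.
relevant = {f"doc{i}" for i in range(30)} | {f"other{i}" for i in range(20)}

print(precision(retrieved, relevant))  # 0.3
```

The 20 relevant `other…` documents that were never retrieved leave the result unchanged, because precision only looks at what the system actually returned.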

Recall in Information Retrieval

Recall in information retrieval measures the fraction of relevant instances that were retrieved. It is calculated as the number of relevant retrieved instances divided by the total number of relevant instances in the collection. Recall is important for ensuring that users receive comprehensive search results that cover all relevant information.
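Using the same set notation, recall divides by the relevant documents instead of the retrieved ones:

$$
\text{Recall} = \frac{\lvert \{\text{relevant documents}\} \cap \{\text{retrieved documents}\} \rvert}{\lvert \{\text{relevant documents}\} \rvert}
$$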

Consider a search engine that retrieves 30 relevant documents out of 50 total relevant documents in the collection. The recall would be 0.6, indicating that 60% of the relevant documents were retrieved. High recall is crucial for providing users with a complete view of the available information, especially in research or academic settings where thoroughness is valued.
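The same kind of sketch works for recall; only the denominator changes. As before, the function name `recall` and the string document IDs are hypothetical and only reproduce the 30-out-of-50 example, and returning 0.0 when the collection contains no relevant documents is an assumed convention.

```python
def recall(retrieved: set[str], relevant: set[str]) -> float:
    """Fraction of the relevant documents in the collection that were retrieved."""
    if not relevant:
        # Assumed convention: recall is undefined with no relevant documents; report 0.0.
        return 0.0
    return len(retrieved & relevant) / len(relevant)


# Hypothetical IDs: the collection contains 50 relevant documents.
relevant = {f"rel{i}" for i in range(50)}
# The system retrieves 30 of them, plus 70 irrelevant documents.
retrieved = {f"rel{i}" for i in range(30)} | {f"irr{i}" for i in range(70)}

print(recall(retrieved, relevant))  # 0.6
```

Here the 70 irrelevant results do not change recall at all, even though they would pull precision down; that asymmetry is why the two metrics are usually reported together.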

Balancing Precision and Recall in Information Retrieval

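Achieving a balance between precision and recall is a common challenge in information retrieval systems, because the two usually pull in opposite directions. Making a system stricter about what it returns tends to raise precision, but it returns fewer results and misses more relevant documents, so recall drops. Making it more permissive tends to raise recall, but more irrelevant documents slip into the results, so precision drops. Developers must consider the specific needs of their users and the context in which the system operates to determine the optimal balance.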
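One way to see the trade-off is to sweep a cutoff over scored results. The sketch below is a hypothetical example and does not describe any particular search engine. It assumes the system assigns each document a relevance score and returns everything scoring at or above a threshold. The scores, the relevance labels, and the name `precision_recall_at` are all invented for illustration.

```python
# Hypothetical relevance scores assigned by a ranking model to ten documents.
SCORES = {
    "d1": 0.95, "d2": 0.90, "d3": 0.80, "d4": 0.70, "d5": 0.60,
    "d6": 0.50, "d7": 0.40, "d8": 0.30, "d9": 0.20, "d10": 0.10,
}
# Hypothetical ground truth: the documents that are actually relevant.
RELEVANT = {"d1", "d2", "d4", "d6", "d9"}


def precision_recall_at(threshold: float) -> tuple[float, float]:
    """Retrieve every document scoring at or above `threshold`, then evaluate."""
    retrieved = {doc for doc, score in SCORES.items() if score >= threshold}
    hits = len(retrieved & RELEVANT)  # relevant documents that were retrieved
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(RELEVANT)
    return precision, recall


# A strict cutoff, a moderate one, and a permissive one.
for threshold in (0.85, 0.55, 0.15):
    p, r = precision_recall_at(threshold)
    print(f"threshold={threshold:.2f}  precision={p:.2f}  recall={r:.2f}")
```

With these made-up scores, the strict cutoff returns only two documents, both relevant (precision 1.00, recall 0.40). The permissive cutoff finds all five relevant documents but also four irrelevant ones (precision about 0.56, recall 1.00). Real systems need not move this smoothly. Precision in particular does not always change monotonically as the threshold moves.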

For instance, a search engine designed for academic research may prioritize recall to ensure comprehensive coverage of relevant literature. In contrast, a search engine for consumer products may emphasize precision to provide users with accurate product recommendations. By understanding the trade-offs between precision and recall, developers can tailor their systems to meet user expectations and improve overall performance.

In summary, precision and recall are vital metrics for evaluating information retrieval systems. By optimizing these metrics, developers can enhance the accuracy and completeness of search results, ultimately improving user satisfaction and system effectiveness.