The world of social media and rapid technological growth is pushing us towards loneliness. This has become a common notion today, and people are turning to AI chatbots for advice. But what if there is a 25 per cent chance that you will not receive the right advice, especially on relationships? Anthropic has published a study that found thousands of people are turning to its Claude chatbot for personal life decisions. The tech company analysed the chats of nearly one million users between March and April 2026. Here’s everything the study found:

Most Advice Queries Focus On These Four Key Areas

According to the Anthropic study, Claude users were relying on it not just for information but for real-life decision-making. Out of nearly 38,000 guidance-related chats, nearly 75 per cent were concentrated in just four categories. Conversations related to health and wellness accounted for 27 per cent, followed by career and professional queries at 26 per cent. Relationship-related conversations accounted for 12 per cent, and financial concerns contributed 11 per cent. This breakdown shows how AI is increasingly being used for everyday life choices.

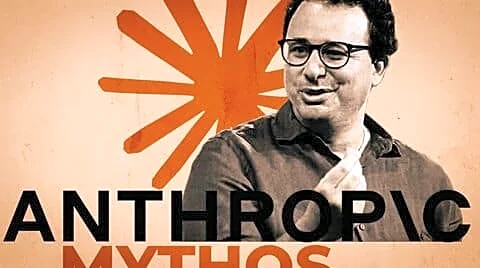

Can Claude AI Show Sycophancy Tendencies?

Anthropic concluded that nearly nine per cent of the conversations included sycophantic behaviour, which simply means that the AI can prioritise flattery and validation of your beliefs over truth and accurate information. In a way, it can reinforce users’ delusional thinking. With millions of users active on Claude, even a nine per cent rate of sycophancy could have a significant impact. It is concerning that nearly 25 per cent of all relationship-related conversations had sycophancy issues. In simple terms, roughly one in every four pieces of relationship advice put validation ahead of accuracy and risked pushing users towards delusion. Moreover, when users pushed back, the sycophantic responses doubled. To fix these issues, Anthropic claims that it trained its Claude Opus 4.7 and Mythos Preview models using specially designed cases focused on relationship advice. The American tech giant notes that these updates have reduced sycophancy in relationship conversations.

What lies ahead is our decision to depend less on AI tools and talk more with real people about our issues. Rather than depending on a machine, it is often advisable to consult career counsellors or therapists for real-world solutions, since AI can be misleading at times.
