AI: The New Study Buddy?

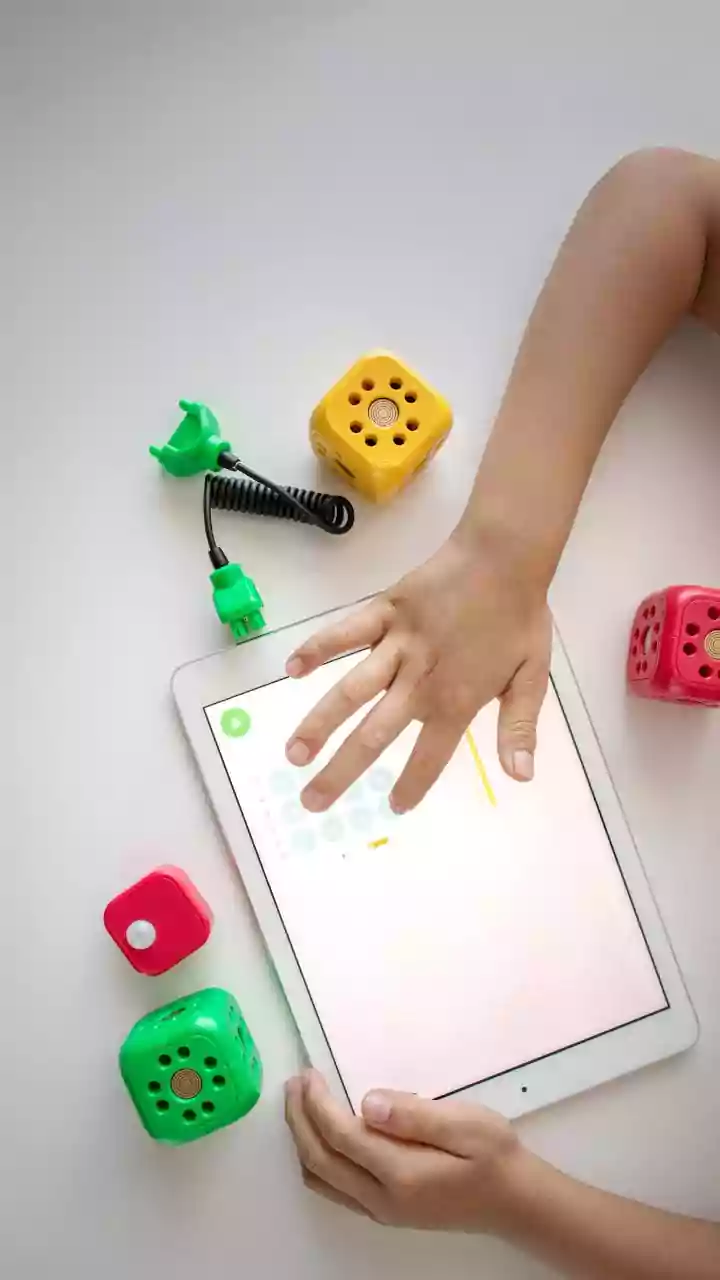

The rapid integration of Artificial Intelligence tools like ChatGPT into educational settings has created a new dynamic for students. Initially envisioned as powerful aids to streamline research and idea generation, these platforms are increasingly becoming a crutch, leading to a potential over-dependence that could stifle genuine intellectual growth. The ease with which AI can produce content for assignments, from converting notes into narratives to drafting essays, presents a compelling shortcut. However, this convenience comes at a cost, raising alarms among experts about the long-term implications for young, developing minds. Students, eager to manage demanding schedules filled with academics, extracurriculars, and future aspirations, find the allure of AI irresistible. This can transform a helpful tool into a primary solution, causing students to delegate their thinking processes to algorithms, thereby diminishing their ability to engage in original thought and personal expression. The worry is that this pattern of cognitive offloading could fundamentally alter how students learn to analyze, synthesize, and create, impacting their intellectual autonomy.

Cognitive Offloading Concerns

The very nature of generative AI, marketed as an intelligent assistant, is now being scrutinized for its potential to erode fundamental cognitive abilities. Preliminary research suggests a concerning trend: users who consistently delegate essay writing to AI may exhibit poorer neurological, linguistic, and behavioral performance compared to those who rely on traditional search engines or no tools at all. Although the sample size was modest, the early release of the findings highlights a growing unease about the unfettered proliferation of large language models (LLMs) without adequate evaluation. Neurologists and educators alike are voicing concerns, particularly for younger demographics, who are the most enthusiastic adopters of these technologies. The analogy of no longer needing to memorize phone numbers because devices store them is a potent illustration of how readily the brain may cease developing certain skills if external tools consistently perform the task. This 'cognitive offloading,' where mental effort is delegated to external sources, can lead to a decline in the capacity for focused attention, deep analysis, reasoned reflection, and critical questioning, skills considered integral to the human experience and the development of a well-rounded intellect.

Echoes of Social Media's Impact

While the widespread adoption of LLMs is a recent phenomenon, the impact of social media on young minds offers a cautionary tale of similar digital influences. Psychologists have observed a consistent pattern in which the constant digital distractions fostered by social media can lead to inattention symptoms and a reduced ability to engage deeply with tasks like reading or homework. Social media platforms, by design, often reward quick, superficial engagement with bite-sized content, promoting immediate emotional responses over nuanced analysis. This environment can foster a culture of skimming rather than deep comprehension, potentially contributing to attention deficits and affecting memory. Because cognitive skills and mental health are closely linked, the erosion of one can affect the other. When cognitive skills falter, the brain's ability to manage stress and emotions effectively diminishes, potentially leading to poor decision-making and a decline in overall well-being. This parallel underscores the potential for AI, if not managed thoughtfully, to amplify existing challenges related to digital media consumption.

Navigating AI in Education

The educational landscape is actively adapting to AI, with initiatives aimed at integrating these tools responsibly. Organizations are developing frameworks and programs to equip educators and students with the necessary skills to evaluate and utilize AI effectively and ethically. AI's potential for personalized learning, through clearer explanations and tailored feedback, is undeniable. However, the implementation of AI in classrooms necessitates robust protocols to address ethical use and privacy concerns. Furthermore, equitable access remains a significant challenge, as a heavy reliance on digital tools could disadvantage students in areas with limited digital infrastructure. There is also a risk of perpetuating existing biases if AI models are developed without diverse perspectives, potentially reinforcing stereotypes about marginalized communities. Therefore, a balanced approach is crucial, one that leverages AI's benefits while actively mitigating its risks to ensure it serves as a genuine tool for enhancement rather than a substitute for foundational learning and critical thought.