Anticipating Massive Demand

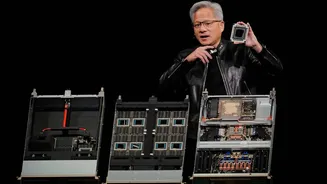

Nvidia's leadership is forecasting a sharp surge in demand for its next-generation AI hardware. The company anticipates that its Blackwell and Rubin AI systems will generate at least $1 trillion in demand through 2027, up from an earlier projection of about $500 billion through 2026. The upward revision reflects the accelerating adoption of advanced AI across industries and points to continued growth in the AI chip market. The company's confidence stems from the rising computational needs of both training and deploying sophisticated AI models.

Innovating Inference Technology

Nvidia has unveiled the Nvidia Groq 3 LPX, a new AI inference system designed to run inference workloads up to 35 times faster than previous solutions. The system combines technology licensed from the AI chip startup Groq with Nvidia's own Vera Rubin architecture. The arrangement, formalized through a $20 billion deal, gives Nvidia access to Groq's specialized expertise. Samsung is slated to manufacture the chips, and the Nvidia Groq 3 LPX system is expected to begin shipping in the latter half of the current year.

Inference: The New Frontier

The launch underscores a shift in the AI industry, with inference emerging as the next major battleground. Inference is the process by which a trained AI model generates predictions or decisions from new data, and it is becoming more critical as AI applications spread. Nvidia's GPUs handle both training and inference, but a growing number of competitors, including major tech firms and emerging chip startups, are developing specialized, more cost-effective hardware aimed specifically at inference. The rise of AI agents, which automate tasks for users, could sharply increase demand for efficient inference processing. With the Groq 3 LPX, Nvidia is positioning itself to maintain its leadership in a segment that is rapidly gaining prominence.