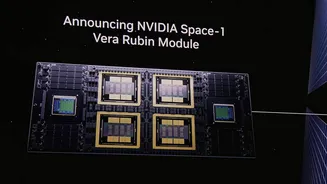

A Unified Compute System

Nvidia's Vera Rubin platform exemplifies a shift in computing architecture, moving beyond individual component excellence to a fully integrated system. The platform comprises seven specialized chips, each designed for a distinct function, housed within five rack-scale systems. The lineup includes the Vera Rubin GPU, optimized for massive AI training and inference tasks, and the Vera CPU, which manages workload distribution and agentic operations. Complementing these are the Groq 3 LPU for ultra-fast, low-latency inference and the interconnect components: NVLink 6 switches for high-speed communication, ConnectX-9 NICs for networking, BlueField-4 DPUs for data processing, and Spectrum-6 Ethernet for broader connectivity. The strength of this integrated design lies in its synergy; substituting any single component disrupts the finely tuned performance gains achieved through co-design. Migrating to an alternative system requires substantial re-engineering of existing workloads and retraining of technical staff, so customers are effectively locked into the ecosystem by high switching costs and the loss of the performance advantages that come from the unified approach. This strategy aligns with transaction cost economics, which posits that vertical integration is most effective when assets have high specificity, meaning their value is greatest within a particular context rather than in alternative uses. Jensen Huang articulated this vision clearly, emphasizing that Vera Rubin is conceived as a single, end-to-end optimized system, comprehensively integrated with its software.

Software and Value Chain Synergy

The competitive edge Nvidia has cultivated extends significantly through its software layer and the coordination of its value chain. According to Porter's value chain framework, sustained competitive advantage arises not from isolated activities but from the efficient interplay between them. Nvidia's architecture amplifies this through its software ecosystem, exemplified by its inference orchestration layer, which directs tasks between specialized hardware. For instance, prefill tasks are routed to Vera Rubin GPUs while decode operations are handled by Groq LPUs, a division of labor that significantly boosts performance at premium inference tiers. This orchestration is built on two decades of developer investment in CUDA. By 2026, CUDA's reach has expanded to various architectures, and its developer community grew substantially between 2020 and 2025, cementing Nvidia's dominance. A vendor offering standalone GPUs in a diverse computing environment cannot replicate the performance uplifts that stem from tightly coupled hardware, interconnects, and orchestration software operating as a cohesive unit. Furthermore, the developer tooling, libraries, and widespread familiarity built around CUDA over those two decades create an ecosystem gap that is exceptionally difficult and time-consuming for competitors to bridge.
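The prefill/decode split described above can be illustrated with a minimal sketch. This is not Nvidia's orchestration software; the pool names, the `Request` type, and the `plan` function are hypothetical and exist only to show the idea of routing each inference phase to a different class of hardware.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    PREFILL = "prefill"  # process the whole prompt in one pass
    DECODE = "decode"    # generate output tokens one at a time


# Hypothetical pool names. The article only states that prefill goes to
# GPUs and decode goes to LPUs; everything else here is an assumption.
ROUTING_TABLE = {
    Phase.PREFILL: "gpu_pool",
    Phase.DECODE: "lpu_pool",
}


@dataclass
class Request:
    request_id: str
    prompt_tokens: int
    max_new_tokens: int


def plan(request: Request) -> list[tuple[Phase, str, int]]:
    """Return the (phase, pool, token_count) steps for one request."""
    steps = [(Phase.PREFILL, ROUTING_TABLE[Phase.PREFILL], request.prompt_tokens)]
    # A request that generates no new tokens never reaches the decode pool.
    if request.max_new_tokens > 0:
        steps.append(
            (Phase.DECODE, ROUTING_TABLE[Phase.DECODE], request.max_new_tokens)
        )
    return steps


if __name__ == "__main__":
    for phase, pool, tokens in plan(Request("r1", 2048, 256)):
        print(f"{phase.value}: {tokens} tokens on {pool}")
```

A real orchestrator would also have to move intermediate state between pools and balance load across them; the sketch leaves that out to keep the routing decision itself visible.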

Platform Economics for Investors

From an investment perspective, Nvidia's strategy underscores a shift towards valuing platform economics over the traditional focus on individual chip cycles. The Vera Rubin NVL72 system, for example, offers a 10x improvement in inference throughput per watt and a tenfold reduction in cost per token compared to the Grace Blackwell system. When combined with Groq, the throughput improvement at premium inference tiers rises to 35x. In data centers where power capacity is a critical constraint, tokens per watt directly determines infrastructure revenue potential, and it is a metric on which Nvidia's platform now leads decisively. Durable returns in platform markets typically accrue to companies that establish their infrastructure as the essential operating environment for the surrounding ecosystem. Enterprises making multiyear financial commitments to platforms like Vera Rubin are essentially validating this dominant market position. For valuation models, this distinction is crucial: models relying solely on chip cycle assumptions tend to underestimate the compounding value generated by a robust ecosystem. The transition to vertically integrated systems calls for a reevaluation of valuation methodologies, moving from linear hardware replacement cycles to perpetual infrastructure annuity models in discounted cash flow (DCF) analyses. The success of payment networks like Visa offers a parallel: their strength lies in the transaction network, the developer infrastructure built upon it, and the prohibitive cost for participants to recreate it. Nvidia's CUDA ecosystem, comprehensive software stack, and integrated hardware operate on a similar principle. Key performance indicators for investors should include the expansion of the developer base, the integration of inference workloads with CUDA, and the rate at which enterprises are committing fixed capital expenditure to the platform.
After twenty years of development, this ecosystem has indeed become Nvidia's most significant asset.
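
Two of the quantitative ideas in this section can be sketched in a few lines: revenue in a power-constrained data center scaling with tokens per watt, and the gap between valuing a finite hardware cycle and valuing a perpetual annuity. The functions below use standard present-value formulas; the function names and every input an analyst would pass in are illustrative assumptions, not figures from Nvidia or from this article.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def annual_token_revenue(power_watts: float,
                         tokens_per_joule: float,
                         price_per_million_tokens: float) -> float:
    """Revenue from a fixed power budget running at full utilization.

    Throughput per watt (tokens per second per watt) is the same
    quantity as tokens per joule.
    """
    tokens = power_watts * tokens_per_joule * SECONDS_PER_YEAR
    return tokens / 1_000_000 * price_per_million_tokens


def pv_finite_cycle(cash_flow: float, rate: float, years: int) -> float:
    """Present value of a level year-end cash flow over a fixed number
    of years (the 'hardware replacement cycle' view). Requires rate > 0."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    return cash_flow * (1 - (1 + rate) ** -years) / rate


def pv_growing_perpetuity(cash_flow: float, rate: float, growth: float) -> float:
    """Present value of a cash flow that starts at `cash_flow` next year
    and grows forever at `growth` (the 'infrastructure annuity' view)."""
    if growth >= rate:
        raise ValueError("growth must be below the discount rate")
    return cash_flow / (rate - growth)
```

With the power budget and token price held fixed, a 10x gain in tokens per joule yields 10x the revenue from `annual_token_revenue`, which is why the metric matters when power is the binding constraint. For any positive discount rate, `pv_growing_perpetuity` with zero growth exceeds `pv_finite_cycle` for the same cash flow, and that difference is the value a chip-cycle model leaves out if the annuity assumption holds.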