Shifting AI Landscape

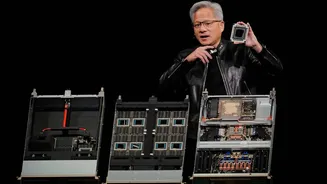

For years, Nvidia enjoyed unparalleled success with a general-purpose chip design backed by its powerful CUDA software platform, which made its GPUs the de facto standard for AI development. This strategy propelled the company to an immense market valuation, more than 90% of the AI accelerator market, and impressive profit margins. However, AI hardware is undergoing a fundamental transformation, one that has compelled even Nvidia's CEO, Jensen Huang, to acknowledge the limitations of this approach. The emergence of a new Nvidia chip designed specifically for inference, potentially stemming from a significant acquisition, marks a clear departure from the company's previous one-size-fits-all focus and signals an adaptation to evolving market demands and competitive pressures.

Rivals' Strategic Push

A noticeable trend is that Nvidia's own customers are increasingly exploring alternatives. Tech giants Google, Microsoft, Amazon, and Meta have all introduced their own AI-specific chips. These custom designs are not only benchmarked rigorously against Nvidia's offerings but also marketed prominently for their cost-effectiveness in large-scale deployments. This maneuvering suggests a concerted effort by these companies to gain an edge in the fast-growing AI inference market, directly challenging Nvidia's established position and potentially eroding its market share.

Inference Economics Evolve

The bedrock of Nvidia's dominance has been its proprietary CUDA ecosystem, which fostered strong developer loyalty and, with it, dependence on Nvidia hardware. However, as AI workloads shift toward inference (running already-trained models to produce outputs), the economics begin to favor specialized hardware. Analysts predict that by 2030 inference will account for 75% of AI data center spending, a significant increase from its current share. The purpose-built chips from Google, Microsoft, Amazon, and Meta are engineered precisely for these inference workloads and, according to their makers, cost less to operate at scale. For instance, Google's Tensor Processing Units (TPUs) are reported to offer a total cost of ownership substantially lower than comparable high-end Nvidia server systems. Similarly, Microsoft's latest chip designs target better performance per dollar, and the company claims they outperform Nvidia's previous-generation hardware on key AI tasks. Meta has also been aggressively developing in-house AI chips and has committed to releasing new generations at a rapid pace, further intensifying the competition.

Market Reaction to Competition

Financial markets have already begun to reflect this shifting competitive landscape. When news broke that a major customer planning billions of dollars in AI infrastructure investment was considering a rival's chips for its data centers, Nvidia's stock fell sharply in a single session, erasing hundreds of billions of dollars in market capitalization. Meanwhile, shares of the companies developing competing technologies rose noticeably. Nvidia's public response was defensive, emphasizing that its platform runs every AI model and works across all computing environments. While technically accurate, that argument is increasingly overshadowed by the importance of cost-efficiency and specialized performance for specific AI workloads, areas where rivals are showing significant advantages.

Future of AI Hardware

The new wave of AI hardware is leaning toward purpose-built designs. Some rely on large amounts of on-chip SRAM rather than the high-bandwidth memory (HBM) that feeds many of Nvidia's leading chips, sidestepping both HBM-related bottlenecks and the current supply constraints on those components. Although the overall AI chip market is expanding rapidly enough to leave room for multiple successful vendors, Nvidia's historical pricing power is undeniably under threat. By acknowledging the need for dedicated inference hardware, the company's leadership has effectively conceded a point its competitors have long argued, signaling a significant shift in the competitive dynamics of the AI hardware industry.