End of an Era

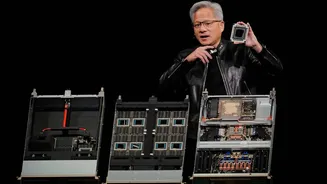

For years, Nvidia built an empire valued at $4.5 trillion on a straightforward philosophy: a single chip designed to handle every artificial intelligence task, deployable in any environment. The approach was remarkably successful. The proprietary CUDA software ecosystem cemented developer loyalty, and Nvidia's graphics processing units (GPUs) became the indispensable foundation of the AI revolution, leaving competitors struggling to gain traction. The company captured over 90% of the AI accelerator market, posted gross margins of 75%, and saw its stock price climb high enough to make it the most valuable company in the world.

The AI hardware market, however, is changing in ways Nvidia can no longer afford to overlook. CEO Jensen Huang has decided to introduce a new chip engineered specifically for inference at the upcoming GTC developer conference, the debut of technology acquired through a $20 billion Groq deal finalized in December. That decision is a strong signal that even he recognizes the limits of the company's established strategy.

The Inference Shift

The catalysts are clear: customers are quietly exploring alternatives, significant market value is being erased in single trading sessions, and the very companies that once eagerly bought Nvidia's GPUs are now building their own. Google, Microsoft, Amazon, and Meta have all unveiled custom-designed AI chips in recent months. Each has been explicitly benchmarked against Nvidia's products and presented as a more economical option for large-scale deployment. This marks a fundamental change in how AI computation is approached, away from a one-size-fits-all model and towards specialized, cost-optimized hardware. The cost of running AI models, particularly for inference, has become a paramount concern, prompting these companies to invest heavily in in-house silicon.

Competition Heats Up

The foundation of Nvidia's market leadership has consistently been CUDA, the proprietary software suite that ties developers to its hardware. But as AI workloads shift towards inference (running trained models to produce outputs), the economics are beginning to favor alternatives to Nvidia. Analysts at Bank of America project that inference will account for 75% of AI data center spending by 2030, up from approximately 50% last year. Specialized chips from Google, Microsoft, Amazon, and now Meta are engineered for exactly this demand, promising significantly lower operating costs at scale. Google's Ironwood Tensor Processing Unit (TPU), for instance, reportedly achieves a total cost of ownership roughly 30-44% lower than that of Nvidia's equivalent GB200 Blackwell server. Microsoft's recently announced Maia 200, manufactured on TSMC's 3nm process, claims a 30% improvement in performance per dollar compared to its predecessor and explicitly outperforms Google's seventh-generation TPU on FP8 tasks. Meanwhile, Meta has introduced four new in-house MTIA chips and plans to release a new generation approximately every six months.

Market Reacts Swiftly

Financial markets are already reflecting the shift. When reports surfaced that Meta, a major Nvidia customer poised to invest up to $72 billion in AI infrastructure this year, was evaluating Google's TPUs for its data centers, Nvidia's stock fell more than 6% in a single trading session, erasing approximately $250 billion in market value. Alphabet, Google's parent company, rose 4%, and Broadcom, a key supplier behind Google's chips, surged 11%. Nvidia's public reaction was notably defensive. In a statement on X, the company asserted that it is 'a generation ahead of the industry' and 'the only platform that runs every AI model and does it everywhere computing is done.' The statement is factually accurate, but the question that matters is shifting from 'running every model' to 'running the most relevant models efficiently and affordably.' The market's focus is moving towards economic viability and specialized performance.

Future Hardware Landscape

The Financial Times reports that Groq's Language Processing Unit (LPU), now being integrated into Nvidia's product line, uses static random-access memory (SRAM) rather than the high-bandwidth memory (HBM) that powers Nvidia's premium chips. HBM is in short supply, with manufacturers such as SK Hynix and Micron struggling to meet escalating demand. A chip based on Groq's architecture sidesteps that bottleneck entirely.

Nvidia is not conceding defeat. Industry analysis firm SemiAnalysis expects Google, Amazon, and Nvidia all to sell chips in large volumes, because the market is expanding fast enough to support multiple successful players. Nvidia's historical pricing power, however, is under threat. By acknowledging the need for dedicated inference hardware, Jensen Huang has effectively confirmed the argument rivals have been making for years about the limits of a single, all-purpose chip design.