A New Chip Era

Nvidia's reign as the king of AI accelerators, built on the premise of a single, versatile chip powered by its CUDA software, is facing unprecedented disruption.

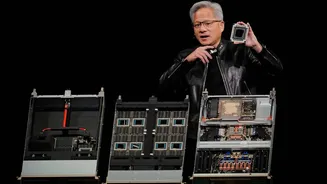

For years, this approach yielded immense success, establishing Nvidia's GPUs as the backbone of the AI revolution and securing a market share exceeding 90% with impressive profit margins. However, the AI field is evolving rapidly, particularly in inference, the process of running trained AI models. As inference workloads grow and become more cost-sensitive, major technology players like Google, Microsoft, and Meta are developing their own tailored AI chips. Nvidia is now pivoting in response: Jensen Huang's anticipated introduction of a new inference-focused chip signals a clear acknowledgement that the company's established strategy might no longer be sufficient against specialized alternatives designed for greater efficiency and lower operating costs.

Challengers Emerge

The AI hardware market is undergoing a profound transformation, compelling even industry titans to re-evaluate their strategies. Customers that were once loyal Nvidia adopters are now actively exploring alternatives as AI inference grows in importance and cost. Google, Microsoft, and Amazon have publicly announced their own bespoke AI chips, explicitly designed and benchmarked to compete with Nvidia's offerings and emphasizing cost-effectiveness for large-scale deployments. Meta has joined them, revealing multiple in-house AI chip designs that are slated for updates approximately every six months. This competition is already affecting Nvidia's market standing, as shown by sharp swings in its share price following reports of major clients considering alternative chips.

The Inference Equation

Nvidia's market dominance rested largely on its proprietary CUDA software ecosystem, which fostered strong developer loyalty. However, as AI workloads increasingly shift towards inference, the economics begin to work against Nvidia. Projections indicate that by 2030, inference will account for 75% of AI data center spending, up from roughly 50% today. Purpose-built chips from companies like Google, Microsoft, and Meta are engineered for this specific function and offer considerably lower running costs at scale. For instance, Google's Ironwood TPU reportedly achieves a total cost of ownership 30-44% lower than that of Nvidia's comparable GB200 Blackwell server. Similarly, Microsoft's new Maia 200 chip is designed for better performance per dollar, and Microsoft explicitly claims it outperforms Nvidia's previous generation on certain tasks.

Market Reaction

The financial markets are keenly observing these shifts, with Nvidia's stock valuation showing sensitivity to competitive developments. A notable instance occurred when reports surfaced that Meta, a substantial Nvidia client planning extensive AI infrastructure investments, was evaluating Google's TPUs for its data centers. This news triggered a sharp decline in Nvidia's stock, erasing approximately $250 billion in market capitalization within a single trading session, while competitors like Alphabet saw gains. Nvidia's public response emphasized its position as offering a universally compatible platform for all AI models. While technically accurate, this claim is increasingly being overshadowed by the growing demand for specialized hardware that can efficiently execute specific models at a lower cost. The market's reaction underscores a growing sentiment that while breadth of capability is valuable, cost-efficiency in the dominant inference workloads is becoming paramount for large-scale AI deployments.

Rethinking Hardware

The evolving landscape highlights a growing preference for specialized AI hardware. Groq's LPU (Language Processing Unit) technology, now being integrated into Nvidia's product offerings following an acquisition, uses SRAM rather than the high-bandwidth memory (HBM) found in Nvidia's premium chips. HBM availability is a growing concern, with manufacturers like SK Hynix and Micron struggling to meet escalating demand, and by incorporating Groq's architecture Nvidia aims to circumvent this bottleneck. Even as competition intensifies, the AI chip market is expected to accommodate multiple successful players, including Google, Amazon, and Nvidia, because the sector is growing so quickly. However, Nvidia's pricing power, a historical advantage, is under pressure. Jensen Huang's acknowledgment of the need for dedicated inference hardware effectively validates the arguments long put forth by Nvidia's rivals and signals a significant strategic adaptation.