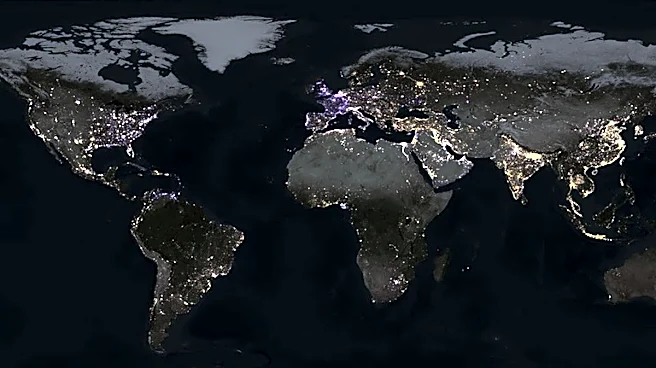

The global artificial intelligence race has hit an unexpected physical hurdle: a shortage of transformers, switchgear, and electricity. In the United States, nearly half of the data centre projects planned for 2026 are currently facing delays or cancellations. While Silicon Valley has the capital and the code, it is increasingly struggling with the “hardware of power”. This bottleneck is not just slowing down the deployment of new AI models; it is fundamentally altering the global infrastructure map, with India emerging as a primary beneficiary.

Why are US data centre projects stalling?

The primary cause of the delay is a critical shortage of electrical infrastructure. Despite massive capital expenditure commitments from tech giants (expected to exceed $650 billion in 2026), funding cannot buy time. Lead times for high-power transformers in the US have ballooned from two years to nearly five years. Because AI data centres require massive, consistent power loads, they are competing with the broader “electrification” of the American economy, including electric vehicles and heat pumps, for the same limited supply of components.

Furthermore, a significant portion of the electrical supply chain remains dependent on imports, particularly from China. Geopolitical tensions and trade barriers have made it difficult for US firms to “shore up” their domestic manufacturing quickly enough to meet the 18-month deployment cycles required for AI. This has created a “power gap” where ambitious projects like OpenAI’s “Stargate” face logistical hurdles that money alone cannot solve.

How do these delays impact AI investment returns?

For investors, the math is becoming increasingly stressful. The data centre sector is currently in a $3 trillion investment supercycle, but returns depend on “speed to market”. When a facility is delayed by two years, the GPUs (Graphics Processing Units) inside it can become nearly obsolete before they even go live. This “hardware depreciation” without active revenue generation is a significant drag on return on investment (ROI).
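To put a rough number on that drag, the sketch below applies simple straight-line depreciation. The five-year useful life is an illustrative assumption, not a figure from this article, and the function name is hypothetical; real GPU value tends to fall unevenly as new chip generations arrive.

```python
def value_lost_before_launch(delay_years: float, useful_life_years: float) -> float:
    """Share of hardware value written off before the facility earns any revenue.

    Assumes straight-line depreciation over `useful_life_years`, which is a
    simplification chosen for illustration only.
    """
    if delay_years < 0 or useful_life_years <= 0:
        raise ValueError("delay must be >= 0 and useful life must be > 0")
    # Cap at 1.0: hardware cannot lose more than its full value.
    return min(delay_years / useful_life_years, 1.0)


if __name__ == "__main__":
    # Two-year delay (from the article) against an ASSUMED five-year useful life.
    share = value_lost_before_launch(delay_years=2, useful_life_years=5)
    print(f"{share:.0%} of hardware value is gone before first revenue")
```

Under those assumptions, a two-year delay consumes two-fifths of the hardware's accounting life before a single workload is billed. A shorter assumed life makes the picture worse.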

As construction costs rise—approaching $11.3 million per megawatt in 2026—companies are being forced to pivot. Instead of waiting for massive “gigawatt-scale” campuses to plug into overstrained US grids, many firms are now looking for “distributed capacity” in regions where the grid is more flexible or the regulatory path is faster. This is where the global AI focal point is beginning to shift.

What does the US power crunch mean for India?

For India, the US power crisis represents a historic “sovereign opportunity”. As Western projects stall, global hyperscalers are rerouting investments toward the Indian market, which offers significantly lower construction costs—roughly $6 million to $7 million per megawatt. India is currently in its largest-ever expansion cycle, with nearly 3.5 GW of capacity planned across hubs like Mumbai, Hyderabad, and Chennai.
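The cost gap is easier to judge with a worked example. The sketch below compares construction cost only, using the per-megawatt figures quoted in this article (about $11.3 million in the US, $6 million to $7 million in India). The 100 MW facility size and the function names are hypothetical, and the comparison ignores power prices, land, cooling and financing.

```python
# Construction cost per megawatt, in millions of US dollars (figures from the article).
US_COST_PER_MW = 11.3
INDIA_COST_PER_MW_RANGE = (6.0, 7.0)  # (low, high)


def build_cost(capacity_mw: float, cost_per_mw: float) -> float:
    """Total construction cost in $ million for a facility of the given size."""
    return capacity_mw * cost_per_mw


def india_savings_range(capacity_mw: float) -> tuple[float, float]:
    """(minimum, maximum) construction saving in $ million versus a US build."""
    us_cost = build_cost(capacity_mw, US_COST_PER_MW)
    low, high = INDIA_COST_PER_MW_RANGE
    # The high end of India's cost range gives the smallest saving, and vice versa.
    return (us_cost - build_cost(capacity_mw, high),
            us_cost - build_cost(capacity_mw, low))


if __name__ == "__main__":
    capacity_mw = 100  # ASSUMED facility size, for illustration only
    min_saving, max_saving = india_savings_range(capacity_mw)
    print(f"Saving on a {capacity_mw} MW build: "
          f"${min_saving:,.0f}M to ${max_saving:,.0f}M")
```

On these figures, the same hypothetical 100 MW facility would cost roughly $430 million to $530 million less to build in India, before any of the other factors are counted.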

The Indian government has doubled down on this advantage in Budget 2026, offering a long-term income tax holiday until 2047 for foreign cloud providers using India-based data centres. By positioning itself as a “safe harbour” for AI infrastructure, India is not just serving its domestic market but is becoming a global back-end for international AI inference. While India still faces its own “execution risks” regarding cooling technology and grid stability, its ability to commission capacity faster than the US is making it the new “command centre” for the next phase of the AI revolution.
