Massive AI Song Takedown

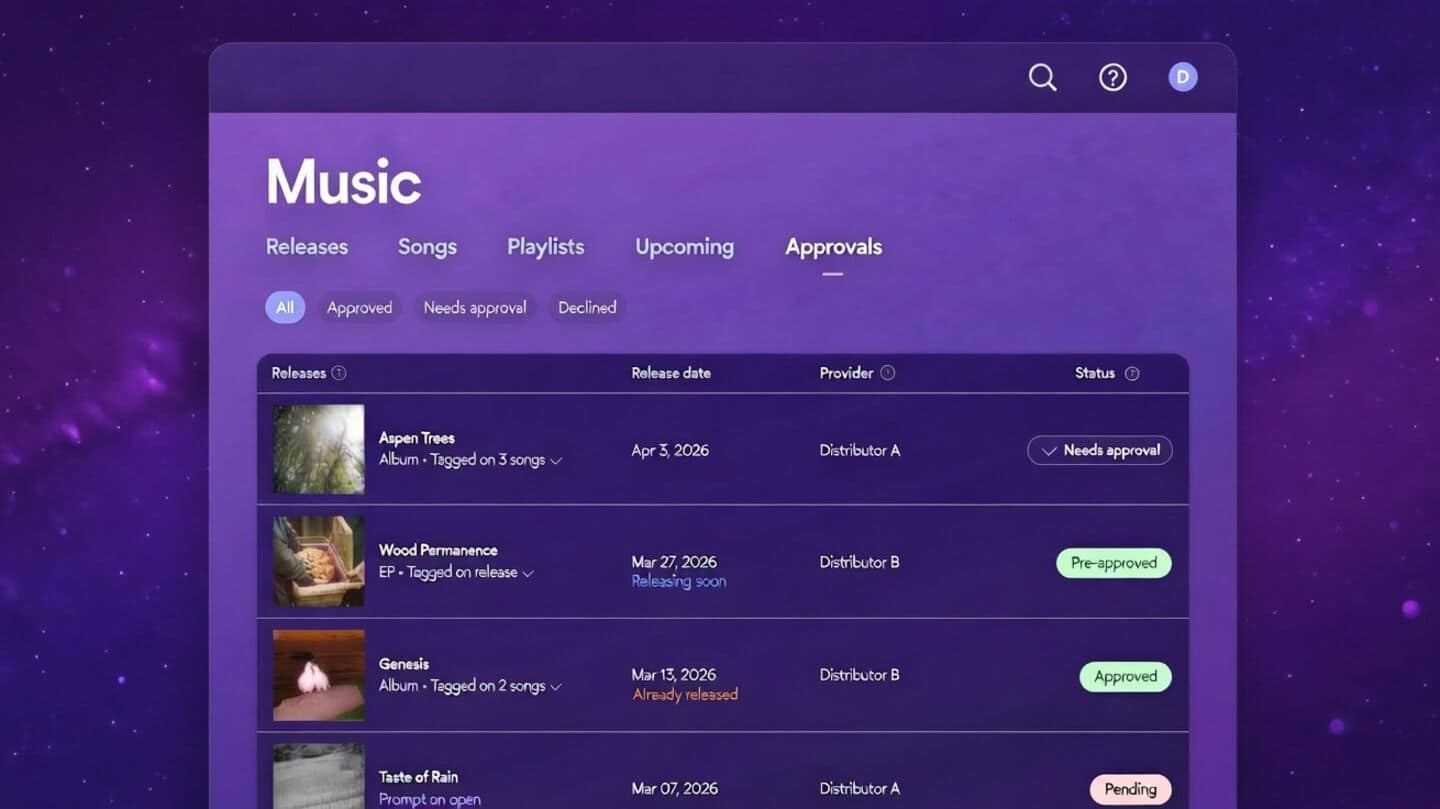

The music world is grappling with an unprecedented surge of AI-generated tracks that mimic the voices and styles of well-known musicians.

Sony Music has initiated a major clean-up, compelling streaming services to remove 135,000 of these AI-generated songs. The unauthorized tracks falsely attribute performances to real recording artists, causing direct commercial harm and undermining genuine promotional efforts. The affected artists range from global superstars such as Beyoncé and Harry Styles to legendary bands such as Queen. The move underscores the industry's growing alarm over the misuse of AI to exploit an artist's established popularity and to create potentially damaging content that infringes on their rights and creative output.

Deepfakes' Damaging Impact

The proliferation of AI-generated music poses a severe threat that can do considerable damage to artists' careers and ongoing projects. According to Dennis Kooker, president of Sony's global digital business, these deepfake tracks can, in the most severe cases, disrupt the launch of new music, compromise promotional campaigns, and tarnish an artist's reputation. Cheap, easy-to-use AI tools are accelerating the problem and driving an ever-increasing volume of unauthorized content. Kooker added that deepfakes are often a calculated response to an artist's popularity, capitalizing on the artist's active promotion of their work. As a result, deepfake tracks gain traction by piggybacking on demand the artist has cultivated, diverting attention and potential revenue from the artist's genuine releases.

Industry-Wide Fraud Concerns

Beyond AI-generated impersonations, the broader music industry faces a significant problem with streaming fraud. Fraudsters create fake artist profiles, upload music to platforms such as Spotify and Apple Music, and then artificially inflate play counts to generate royalty payments illicitly. The International Federation of the Phonographic Industry (IFPI) has pointed out that AI technology has dramatically amplified this type of fraud. The manipulation diverts revenue away from legitimate artists who deserve compensation for their work and distorts the overall picture of music consumption. Industry estimates suggest that as much as 10% of all content on streaming platforms could be fraudulent, highlighting the urgent need for robust detection and prevention measures.